Building Production RAG: An End-to-End Implementation Guide

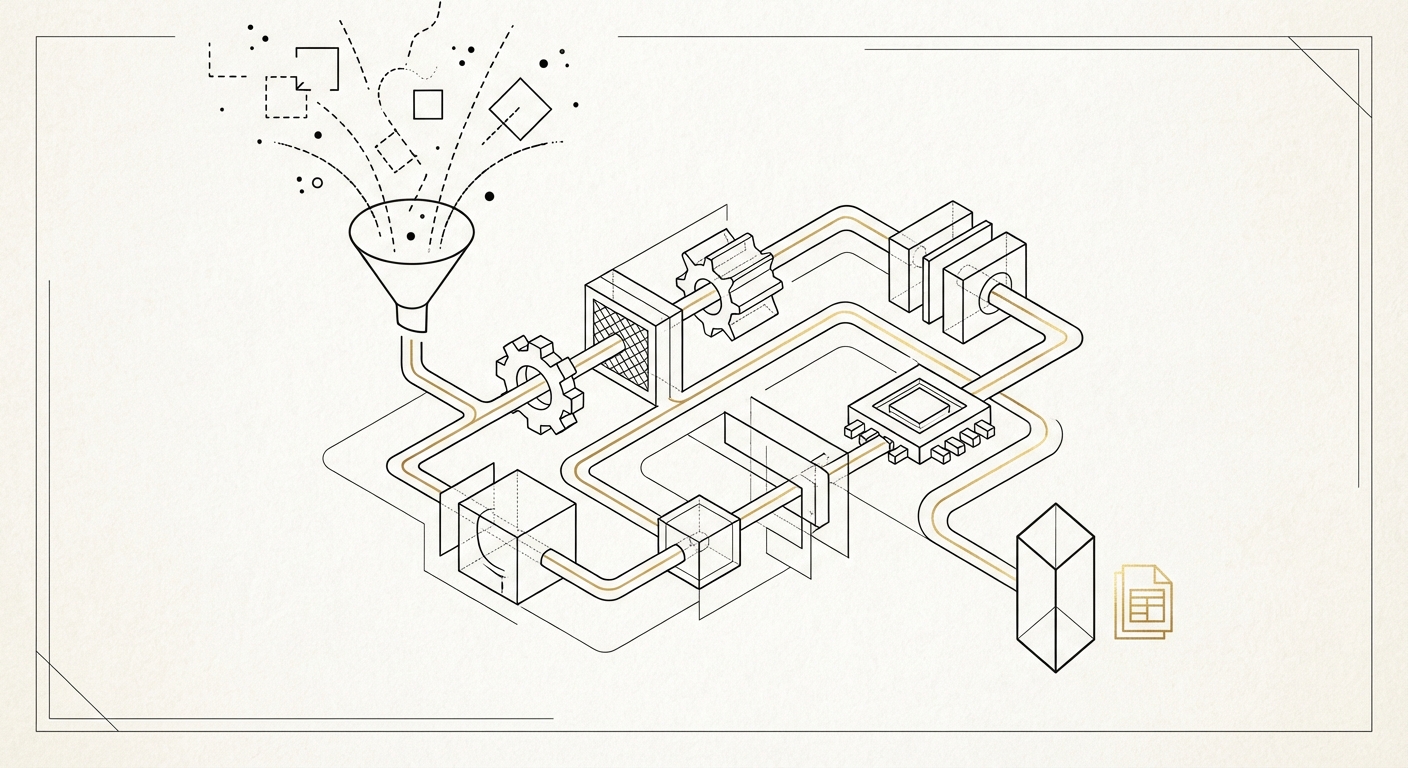

From Prototype to Production: What It Actually Takes Most RAG tutorials stop at “put documents in a vector …

For the last decade, the default answer to “where should we run this?” was the cloud. Elastic, pay-as-you-go, someone else’s problem. And for a while, that worked.

But AI workloads broke the model.

Training a frontier model costs hundreds of millions of dollars, but it happens only a few times a year. Inference (actually running models in production) happens every second of every day. As of early 2026, inference accounts for 55% of AI cloud spending, a share projected to reach 70-80% by year-end. The inference-optimized chip market alone has crossed $50 billion. Hyperscaler capital expenditure is on track to hit $600 billion this year, with roughly 75% of that tied directly to AI.

These numbers tell a clear story: organizations are spending enormous sums running models at scale, and most of that spending is flowing through cloud providers. The question that used to be heretical is now just arithmetic — does it have to?

The organizations that are ahead of this curve aren’t choosing between cloud and on-prem. They’re running both, plus edge, with each tier handling what it’s best at. Deloitte’s 2026 Tech Trends analysis frames this as a three-tier hybrid model, and the economics support it.

The cloud is excellent at what it was always excellent at: variable workloads that need to scale up and down unpredictably.

For AI, that means training runs, experimentation and development, and other bursty or temporary workloads.

The mistake is treating cloud as the default for everything. It’s the right choice for variable, unpredictable, or temporary workloads. It’s the wrong choice for steady-state production inference that runs 24/7 at predictable volume.

Here’s the tipping point that Deloitte identifies: on-premises deployment becomes more economical than cloud when cloud costs exceed 60-70% of the total cost of acquiring equivalent on-premises systems.

For inference workloads specifically, many organizations are already past this threshold. The math isn’t complicated. If you’re running a model continuously — handling thousands or millions of requests per day, every day — the cumulative cloud spend quickly eclipses the cost of owning the hardware.

A common trajectory: a model costs $200 per month to serve during development, then $10,000 per month in production as it scales to millions of queries. On cloud, that $10,000 per month is perpetual rent. On your own hardware, the equivalent compute is a capital expenditure that pays for itself within 12-18 months, after which your marginal cost is power, cooling, and maintenance.
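To make the payback arithmetic concrete, here is a minimal Python sketch. The hardware price and monthly running cost are hypothetical placeholders, not figures from the trajectory above. Payback is the up-front hardware cost divided by what you stop paying the cloud provider each month, net of on-prem running costs.

```python
import math


def payback_months(cloud_monthly: float, onprem_capex: float, onprem_monthly_opex: float) -> float:
    """Months until owning hardware beats renting equivalent cloud compute.

    Payback happens once cumulative monthly savings (cloud bill minus
    on-prem power, cooling, and maintenance) cover the hardware purchase.
    """
    monthly_savings = cloud_monthly - onprem_monthly_opex
    if monthly_savings <= 0:
        return math.inf  # running on-prem costs as much as cloud: it never pays back
    return onprem_capex / monthly_savings


# Hypothetical inputs: $10,000/month cloud bill, $120,000 of hardware,
# $2,000/month for power, cooling, and maintenance.
months = payback_months(10_000, 120_000, 2_000)
print(f"payback after {months:.1f} months")  # payback after 15.0 months
```

With these assumed inputs the hardware pays for itself in 15 months, inside the 12-18 month window; a larger cloud bill or cheaper hardware shortens it.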

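Private infrastructure is the right choice for steady-state production inference that runs 24/7 at predictable volume, and for workloads where data sovereignty or protecting competitive intelligence requires keeping data in-house.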

Inference costs have dropped 280-fold over the last two years. But usage has dramatically outpaced those reductions. Some organizations report monthly AI bills in the tens of millions of dollars. At that scale, the buy-vs-rent calculation tips decisively toward owning.

Some decisions can’t wait for a round trip to a data center.

Cloud inference typically delivers response times of 100-300 milliseconds. Edge deployments can achieve sub-10 millisecond latency. For applications where that difference matters — autonomous vehicles, industrial automation, real-time fraud detection, medical devices — edge isn’t optional. It’s the only architecture that works.

The edge AI market is projected to grow from $24.9 billion in 2025 to $66.5 billion by 2030. That growth is driven by latency-critical use cases like these, which are physically incompatible with centralized inference.

Edge also serves as a cost optimization layer. By filtering and pre-processing data locally, edge deployments can reduce cloud egress costs by up to 70%, sending only the data that actually needs centralized processing.

The decision about where to run a workload is ultimately a financial one. Here’s the framework.

Calculate your steady-state cloud cost. Take your monthly inference bill for a given workload and annualize it. Be honest about the trajectory — if usage is growing, project forward.
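A small sketch of this step, assuming usage grows at a constant month-over-month rate (a simplification; real growth is lumpier). The function name and the 5% growth figure are illustrative only.

```python
def projected_annual_cost(current_monthly: float, monthly_growth: float = 0.0) -> float:
    """Project the next 12 months of spend for one workload.

    current_monthly: this month's inference bill for the workload
    monthly_growth: assumed month-over-month usage growth as a fraction (0.05 = 5%)
    """
    # Month 0 is the current bill; every later month compounds the growth rate.
    return sum(current_monthly * (1 + monthly_growth) ** month for month in range(12))


flat = projected_annual_cost(10_000)           # naive annualization: 12 x the monthly bill
growing = projected_annual_cost(10_000, 0.05)  # same workload with an assumed 5% monthly growth
print(f"flat: ${flat:,.0f}  growing: ${growing:,.0f}")
```

At an assumed 5% monthly growth, the projected annual bill comes out roughly a third higher than the naive 12x figure, which is why the trajectory matters.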

Price the equivalent on-prem. Include hardware, networking, facilities, power, cooling, and staff. Don’t forget the cost of capital. Include a realistic depreciation schedule (typically 3-5 years for AI hardware).
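One way to turn these inputs into a single yearly figure, comparable with the annualized cloud bill from step one, is the standard capital recovery factor: it spreads the purchase price over the depreciation period while charging for the cost of capital. This is a sketch under that assumption; the function name and sample inputs are hypothetical, and your finance team may prefer another convention (for example, straight-line depreciation plus a separate interest line).

```python
def annualized_onprem_cost(capex: float, annual_opex: float, years: int,
                           cost_of_capital: float) -> float:
    """Equivalent annual cost of owning inference hardware.

    capex: hardware, networking, and facilities, paid up front
    annual_opex: power, cooling, staff, and maintenance per year
    years: depreciation period (typically 3-5 for AI hardware)
    cost_of_capital: annual rate as a fraction, e.g. 0.08 for 8%
    """
    if cost_of_capital == 0:
        capital_charge = capex / years  # plain straight-line, no financing cost
    else:
        r = cost_of_capital
        # Capital recovery factor: the level yearly payment that repays capex over `years` at rate r.
        crf = r * (1 + r) ** years / ((1 + r) ** years - 1)
        capital_charge = capex * crf
    return capital_charge + annual_opex


# Hypothetical: $300,000 of hardware, 3-year schedule, 8% cost of capital, $50,000/year opex.
print(f"${annualized_onprem_cost(300_000, 50_000, 3, 0.08):,.0f} per year")
```

With these assumed inputs the cost of ownership comes to about $166,000 per year, versus $150,000 if you ignored the cost of capital.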

Find the crossover. If your annualized cloud cost exceeds 60-70% of the on-prem acquisition cost, you’re in the zone where private infrastructure is more economical. If you’re already spending more on cloud than the hardware would cost to buy outright, you’re past the crossover and burning money on rent.
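The crossover test itself is a single ratio. A minimal sketch, assuming you use the conservative 60% end of the 60-70% band as the threshold (adjust `threshold` to taste); the function name, labels, and sample costs are hypothetical.

```python
def crossover_status(annual_cloud_cost: float, onprem_acquisition_cost: float,
                     threshold: float = 0.60) -> str:
    """Classify a workload by its cloud-to-on-prem cost ratio."""
    ratio = annual_cloud_cost / onprem_acquisition_cost
    if ratio > 1.0:
        # A year of cloud rent already exceeds buying the hardware outright.
        return "past_crossover"
    if ratio >= threshold:
        # Inside the band where private infrastructure is usually cheaper.
        return "crossover_zone"
    return "below_threshold"  # cloud is probably still the economical choice


for cloud in (150_000, 280_000, 520_000):
    print(cloud, crossover_status(cloud, onprem_acquisition_cost=400_000))
```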

Factor in growth. Cloud costs scale linearly with usage. On-prem costs scale in steps (you buy capacity in chunks). If your inference volume is growing rapidly, the cloud cost curve pulls away from the on-prem cost curve faster than most people expect.
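To see why the curves diverge, here is a toy model: cloud cost is demand times a unit price, while on-prem cost is the number of whole servers needed times a per-server monthly cost. All prices and capacities are hypothetical round numbers chosen only to show the shape of the two curves.

```python
import math


def cloud_cost(demand: float, price_per_unit: float) -> float:
    """Pay-per-use: monthly cost scales linearly with demand."""
    return demand * price_per_unit


def onprem_cost(demand: float, capacity_per_server: float, monthly_cost_per_server: float) -> float:
    """Capacity comes in whole servers, so monthly cost rises in steps."""
    servers = max(1, math.ceil(demand / capacity_per_server))  # you own at least one server
    return servers * monthly_cost_per_server


# Hypothetical: $1,000 per million requests on cloud; each server handles
# 10M requests/month at an amortized $4,000/month (hardware, power, staff).
for demand in (5, 15, 40):  # millions of requests per month
    print(f"{demand:>2}M req/mo: cloud ${cloud_cost(demand, 1_000):>6,.0f}  "
          f"on-prem ${onprem_cost(demand, 10, 4_000):>6,.0f}")
```

In this toy model the monthly gap grows from $1,000 to $24,000 as demand rises eightfold.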

Account for what you can’t price. Data sovereignty, latency requirements, competitive intelligence protection, and vendor independence all have value. They’re harder to put in a spreadsheet, but they’re real.

Most organizations find that a small number of high-volume inference workloads account for the majority of their cloud AI spending. Moving those specific workloads to private infrastructure — while keeping everything else on cloud — often delivers 40-60% cost reduction on their total AI compute bill.
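The arithmetic behind that claim is easy to check against your own bill. A minimal sketch with hypothetical numbers: two large workloads move to private infrastructure at an assumed lower monthly cost, and ten small ones stay on cloud.

```python
from typing import List, Optional, Tuple


def total_reduction(workloads: List[Tuple[float, Optional[float]]]) -> float:
    """Fraction by which the total monthly AI compute bill falls.

    Each workload is (current monthly cloud cost, monthly on-prem cost if moved, else None).
    """
    before = sum(cloud for cloud, _ in workloads)
    after = sum(cloud if onprem is None else onprem for cloud, onprem in workloads)
    return 1 - after / before


workloads = [
    (40_000, 15_000),  # high-volume workload moved on-prem (hypothetical costs)
    (30_000, 12_000),  # second high-volume workload moved on-prem
] + [(3_000, None)] * 10  # ten small workloads left on cloud

print(f"total bill reduction: {total_reduction(workloads):.0%}")
```

Here the two moved workloads make up 70% of spend, and moving only them cuts the total bill by 43%.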

The worst outcome is architecting yourself into a single tier. The goal is a system where workloads can move between tiers based on economics, performance requirements, and organizational needs.

This means:

Standardize on open model formats. If your inference stack depends on a proprietary model format or a single vendor’s serving infrastructure, you can’t move. Open formats such as ONNX, together with open-source serving engines such as vLLM and other standard serving frameworks, give you portability.

Abstract the inference layer. Your application shouldn’t know or care whether the model behind it is running on a cloud GPU, an on-prem server, or an edge device. A unified API layer that routes requests based on policy — latency, cost, data residency — gives you the flexibility to shift workloads without rewriting applications.
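A minimal Python sketch of such a layer: the application calls one `generate` method, and a pluggable policy function picks the backend from request metadata. The class names, the metadata keys (`data_residency`, `latency_budget_ms`), and the 20 ms edge cutoff are all hypothetical; `EchoBackend` stands in for real clients to your cloud, on-prem, and edge model servers.

```python
from dataclasses import dataclass
from typing import Callable, Dict, Protocol


class InferenceBackend(Protocol):
    """Anything that can serve a prompt; the app never sees which tier it is."""
    def generate(self, prompt: str) -> str: ...


@dataclass
class EchoBackend:
    """Hypothetical stand-in for a real model-server client."""
    tier: str

    def generate(self, prompt: str) -> str:
        return f"[{self.tier}] {prompt}"


def residency_latency_policy(meta: dict) -> str:
    """Hypothetical routing policy: residency first, then latency, else cloud."""
    if meta.get("data_residency") == "on_prem_only":
        return "onprem"
    if meta.get("latency_budget_ms", 1_000) < 20:
        return "edge"
    return "cloud"


class InferenceClient:
    """The single inference entry point the application depends on."""

    def __init__(self, backends: Dict[str, InferenceBackend],
                 policy: Callable[[dict], str]) -> None:
        self._backends = backends
        self._policy = policy

    def generate(self, prompt: str, **meta) -> str:
        # Routing is decided here, so moving a workload means changing the policy, not the app.
        return self._backends[self._policy(meta)].generate(prompt)


client = InferenceClient(
    {tier: EchoBackend(tier) for tier in ("cloud", "onprem", "edge")},
    residency_latency_policy,
)
print(client.generate("summarize ticket 42", data_residency="on_prem_only"))  # [onprem] summarize ticket 42
print(client.generate("classify frame", latency_budget_ms=5))                 # [edge] classify frame
print(client.generate("draft release notes"))                                 # [cloud] draft release notes
```

Because the policy is just a function, you can later swap in one driven by cost or utilization data without touching any call sites.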

Build unified observability. You need the same metrics, logging, and monitoring regardless of where a workload runs. If your cloud inference has detailed telemetry but your on-prem deployment is a black box, you can’t make informed placement decisions.
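As a minimal illustration, assuming an in-process recorder rather than a real metrics system (in production you would export to whatever monitoring backend you already run): every call is timed the same way and labeled with its tier, so cloud, on-prem, and edge latencies land in one comparable summary. `TierMetrics` and its methods are hypothetical names.

```python
import time
from collections import defaultdict
from statistics import median
from typing import Any, Callable, Dict, List


class TierMetrics:
    """Collects identical latency measurements for every tier."""

    def __init__(self) -> None:
        self._latencies_ms: Dict[str, List[float]] = defaultdict(list)

    def timed(self, tier: str, fn: Callable[..., Any], *args: Any, **kwargs: Any) -> Any:
        # Measure wall-clock latency the same way no matter where fn runs.
        start = time.perf_counter()
        try:
            return fn(*args, **kwargs)
        finally:
            # Record even when the call raises, so failures still show up in the data.
            self._latencies_ms[tier].append((time.perf_counter() - start) * 1_000)

    def summary(self) -> Dict[str, Dict[str, float]]:
        return {
            tier: {"calls": len(samples), "p50_ms": median(samples), "max_ms": max(samples)}
            for tier, samples in self._latencies_ms.items()
        }


metrics = TierMetrics()
metrics.timed("edge", lambda x: x * 2, 21)
metrics.timed("cloud", time.sleep, 0.01)  # simulated slower round trip
print(metrics.summary())
```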

Automate the placement decision. The most sophisticated organizations are moving toward automated workload placement — systems that evaluate cost, latency, utilization, and policy constraints, then route inference requests to the optimal tier in real time.
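A simplified sketch of automated placement, assuming each tier reports its current tail latency, unit cost, utilization, and data region: drop tiers that violate latency, residency, or headroom constraints, then pick the cheapest that remains. The `Tier` fields, the 85% utilization ceiling, and all sample numbers are hypothetical (the latencies loosely echo the cloud and edge figures quoted earlier); a weighted score is one alternative to these hard filters.

```python
from dataclasses import dataclass
from typing import FrozenSet, List, Optional


@dataclass
class Tier:
    name: str
    p95_latency_ms: float  # observed tail latency
    cost_per_1k: float     # cost per 1,000 requests
    utilization: float     # current load, 0.0 to 1.0
    region: str            # where data processed on this tier resides


def place(tiers: List[Tier], max_latency_ms: float, allowed_regions: FrozenSet[str],
          max_utilization: float = 0.85) -> Optional[str]:
    """Return the cheapest tier meeting latency, residency, and headroom limits."""
    eligible = [
        t for t in tiers
        if t.p95_latency_ms <= max_latency_ms
        and t.region in allowed_regions
        and t.utilization < max_utilization
    ]
    if not eligible:
        return None  # nothing compliant: queue, shed load, or alert instead
    return min(eligible, key=lambda t: t.cost_per_1k).name


tiers = [
    Tier("cloud", p95_latency_ms=250, cost_per_1k=0.40, utilization=0.50, region="us-east"),
    Tier("onprem", p95_latency_ms=120, cost_per_1k=0.15, utilization=0.90, region="eu-dc1"),
    Tier("edge", p95_latency_ms=8, cost_per_1k=0.60, utilization=0.30, region="eu-site"),
]
anywhere = frozenset({"us-east", "eu-dc1", "eu-site"})
eu_only = frozenset({"eu-dc1", "eu-site"})

print(place(tiers, 300, anywhere))  # cloud: on-prem is cheaper but too busy
print(place(tiers, 300, eu_only))   # edge: the only compliant tier with headroom
tiers[1].utilization = 0.60         # load on the on-prem cluster drops
print(place(tiers, 300, anywhere))  # onprem
print(place(tiers, 5, anywhere))    # None: no tier meets a 5 ms budget
```

Re-running `place` as utilization changes is what lets the same request land on different tiers over time.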

This isn’t a “cloud is dead” argument. The cloud is excellent for what it does well, and most organizations will continue to run significant AI workloads there. Training will stay predominantly cloud-based for all but the largest organizations. Experimentation and development workflows benefit enormously from cloud elasticity.

This also isn’t a “build your own data center” argument. Private infrastructure in 2026 ranges from a rack of inference-optimized servers in a colocation facility to a full-scale GPU cluster. The entry point is lower than people think, especially for inference, where the hardware requirements are more modest than training.

The argument is simpler: stop treating AI infrastructure as a single-tier decision. The economics, latency requirements, and data sensitivity of each workload should determine where it runs. For most organizations, that means some combination of all three tiers, with the mix shifting over time as workloads mature and volumes grow.

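If you’re starting from a cloud-only position, the migration doesn’t have to be dramatic. The highest-impact first step is usually straightforward: identify the small number of high-volume, steady-state inference workloads that account for most of your cloud AI spend, and evaluate moving just those to private infrastructure.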

The organizations getting this right aren’t religious about any particular tier. They’re pragmatic about economics, clear-eyed about where their workloads actually need to run, and building the infrastructure flexibility to change their minds as conditions evolve.

The three-tier model isn’t a prediction. It’s what the cost curves are already demanding.

Calliope AI provides a unified AI development platform that runs on your infrastructure — cloud, on-prem, or hybrid. Your models, your data, your deployment topology.

From Prototype to Production: What It Actually Takes Most RAG tutorials stop at “put documents in a vector …

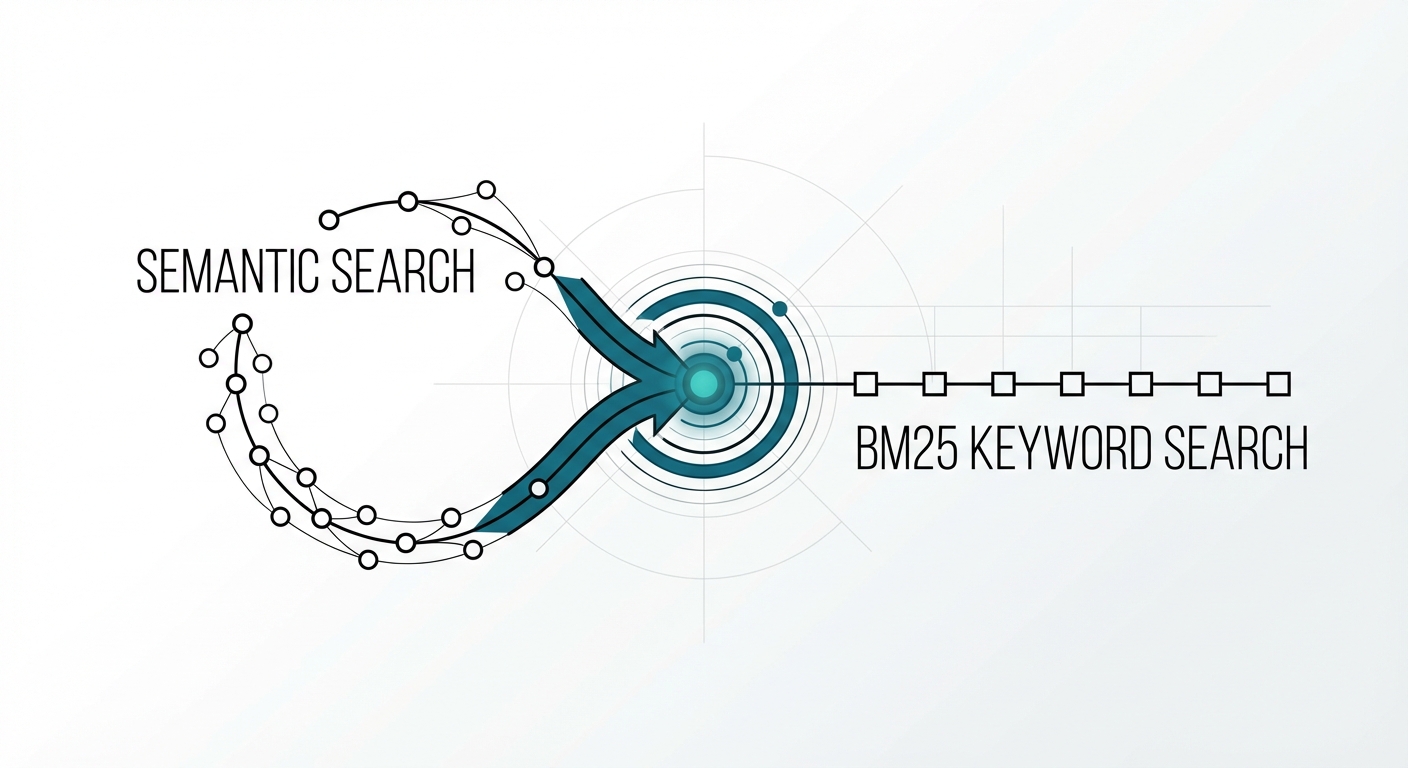

The Retrieval Problem No One Talks About You built a RAG system with a state-of-the-art embedding model. Semantic search …