Building Production RAG: An End-to-End Implementation Guide

From Prototype to Production: What It Actually Takes Most RAG tutorials stop at “put documents in a vector …

In late 2022, running inference at GPT-4-equivalent performance cost roughly $20 per million tokens. In early 2026, the same level of performance costs $0.40 per million tokens.

That’s a 1,000x reduction in just over three years.

Not 10x. Not 100x. A thousand-fold collapse in the cost of machine intelligence. And the curve hasn’t flattened — current projections suggest GPT-4-equivalent inference could hit sub-$0.01 per million tokens by 2028 if the trajectory holds.

This isn’t an incremental improvement. It’s a structural shift in what’s economically possible with AI. And most organizations are still planning around cost assumptions from 18 months ago.

When inference was expensive, you rationed it. You carefully selected which tasks justified an API call. You truncated context windows to keep costs down. You built elaborate caching layers to avoid redundant calls.

When inference costs drop by three orders of magnitude, rationing stops making sense. Instead, entirely new patterns become viable — patterns that were economically absurd two years ago.

At $20 per million tokens, running a monitoring agent 24/7 across your infrastructure was a budget line item that required serious justification. At $0.40, it’s a rounding error. You can have agents continuously watching your systems, parsing logs, flagging anomalies, and summarizing incidents — not as a batch job that runs nightly, but as a persistent presence.

The shift isn’t just about cost. It’s about latency of insight. An always-on agent catches the problem at 2:14 AM. A nightly batch job catches it at 6:00 AM, after four hours of cascading damage.

Why run one approach when you can run five? At current prices, you can afford to have multiple agents tackle the same problem from different angles and pick the best result. Generate three candidate implementations of a function, run tests against all of them, ship the one that passes. Draft four versions of a customer response, score them, send the best.

This is speculative execution — a concept borrowed from CPU design — applied to AI workflows. It trades cheap compute for better outcomes.

Instead of trusting a single model’s output, ask three. Compare the answers. Flag disagreements for human review.

This pattern was prohibitively expensive when frontier models charged $15+ per million input tokens. With mid-tier models at $0.50-$3 per million tokens and efficient models at $0.10-$0.25, running a three-model consensus pipeline costs less than a single frontier call did in 2023.

The implications for high-stakes domains — healthcare, finance, legal — are significant. Multi-model consensus doesn’t eliminate errors, but it dramatically changes the detection rate.

The old optimization: strip context to the minimum. Send only what’s absolutely necessary. Carefully curate every token in the prompt because you’re paying for each one.

The new approach: send more context, not less. Include the full file instead of the snippet. Attach the complete conversation history instead of a summary. Let the model see the whole picture. The marginal cost of a larger context window is now trivial compared to the cost of the model misunderstanding your intent because you starved it of information.

Retrieval-Augmented Generation used to be a cost optimization — a way to avoid fine-tuning by injecting relevant context at inference time. Now it’s just good architecture. When retrieval plus inference costs almost nothing, RAG becomes the default pattern for any knowledge-intensive application. You can afford to retrieve broadly, include generously, and let the model synthesize.

The inference market reflects this shift. It expanded from approximately $12 billion in 2023 to a projected $55 billion by 2026, with inference now representing roughly 67% of total AI compute demand — up from 33% in 2023. The industry has moved from a training-dominated era to an inference-dominated one.

Here’s the part that should make strategists uncomfortable: the current price collapse is not purely driven by efficiency gains.

Yes, the hardware is getting dramatically better. NVIDIA’s Blackwell architecture delivers up to 10x cost-per-token reduction compared to Hopper. Real-world deployments are seeing concrete results — healthcare inference platforms reporting 2.5x better throughput per dollar, gaming companies dropping costs from 20 cents to 5 cents per million tokens, customer service pipelines achieving 6x cost reduction per query.

But a significant portion of the current pricing landscape is subsidized. VC-backed inference providers are pricing below cost to capture market share. Hyperscalers are cross-subsidizing inference with cloud compute margins to lock in customers. Open-source model providers are racing to the bottom on API pricing to build developer ecosystems.

This is the classic land-grab phase of a new infrastructure market. And like every land grab, it ends.

The window is roughly 12-24 months. After that, consolidation happens. The subsidies dry up or get restructured. Prices normalize to something that reflects actual hardware amortization, energy costs, and sustainable margins.

Gartner projects worldwide AI spending will total $2.5 trillion in 2026 . That’s real money, and the companies spending it will eventually demand real unit economics from their providers.

This creates a genuine strategic dilemma: do you plan around current subsidized prices, or build for where costs normalize?

If you architect entirely around today’s API pricing, you’re building on someone else’s subsidy. When that subsidy ends — through price increases, rate limits, deprecation of cheap model tiers, or provider consolidation — your cost model breaks. Your always-on agents suddenly have a meaningful line item. Your speculative execution pipelines need to be re-justified.

If you plan only for normalized prices, you miss the window. Your competitors who built aggressively during the subsidy period have compounding advantages in workflow automation, institutional knowledge capture, and operational efficiency.

The answer, as with most strategic questions, is to do both — but deliberately.

The framework that makes sense for most organizations:

Own your inference infrastructure for predictable workloads. The tasks you know you’ll run every day — code assistance, document processing, monitoring, internal chat, RAG pipelines — should run on hardware you control. Self-hosted open-source models on your own GPU infrastructure (or dedicated cloud GPU instances) give you predictable costs that don’t shift when a provider changes their pricing page.

With Blackwell-class hardware reducing cost per token by up to 10x versus the previous generation, the economics of self-hosted inference are better than they’ve ever been. A dedicated inference cluster pays for itself quickly when you’re running millions of tokens per day on predictable workloads.

Use APIs for burst capacity and frontier capabilities. When you need the absolute best model for a complex reasoning task, or when you have an unpredictable spike in demand, API providers are the right tool. Pay the per-token rate, get the capability, move on. The key is that this is a conscious choice for specific use cases, not your default architecture.

This is the hybrid model: owned infrastructure for the base, API access for the peaks. It insulates you from price normalization on your core workloads while still letting you take advantage of subsidized pricing for variable demand.

If your organization is still in the “evaluate and pilot” phase of AI adoption, you’re already behind the cost curve. The organizations that are winning are the ones that treated the cost collapse as a signal to go deep, not a reason to wait for it to get even cheaper.

Concretely:

Audit your current AI spend and usage patterns. Understand which workloads are predictable (candidates for self-hosting) and which are variable (keep on APIs). Most organizations don’t actually know this — they’ve been on APIs for everything because that’s how they started.

Run the self-hosting math. Take your top three inference workloads by volume. Price out what it would cost to run them on dedicated infrastructure with open-weight models. In many cases, the break-even point is measured in weeks, not months.

Build for model portability. Whatever you build, abstract the model layer. The inference provider landscape will consolidate. Models will get replaced. If your application is tightly coupled to a specific model or provider, you’re accumulating technical debt that will come due.

Invest in the patterns that cheap inference enables. Multi-model consensus, speculative execution, always-on monitoring — these aren’t theoretical. They’re production patterns at companies that took the cost collapse seriously. The competitive advantage compounds over time.

The 1,000x cost collapse isn’t really about the money. It’s about what becomes thinkable when the marginal cost of intelligence approaches zero.

When inference was expensive, AI was a tool you reached for deliberately. When it’s nearly free, it becomes ambient — embedded in every process, every workflow, every decision. The organizations that understand this aren’t optimizing their AI spend. They’re redesigning their operations around the assumption that intelligence is cheap and abundant.

The cost curve will normalize eventually. The operational advantages built during the collapse won’t.

Calliope provides self-hosted AI development infrastructure — giving teams the tools to own their inference layer, control their costs, and build on ground that doesn’t shift.

From Prototype to Production: What It Actually Takes Most RAG tutorials stop at “put documents in a vector …

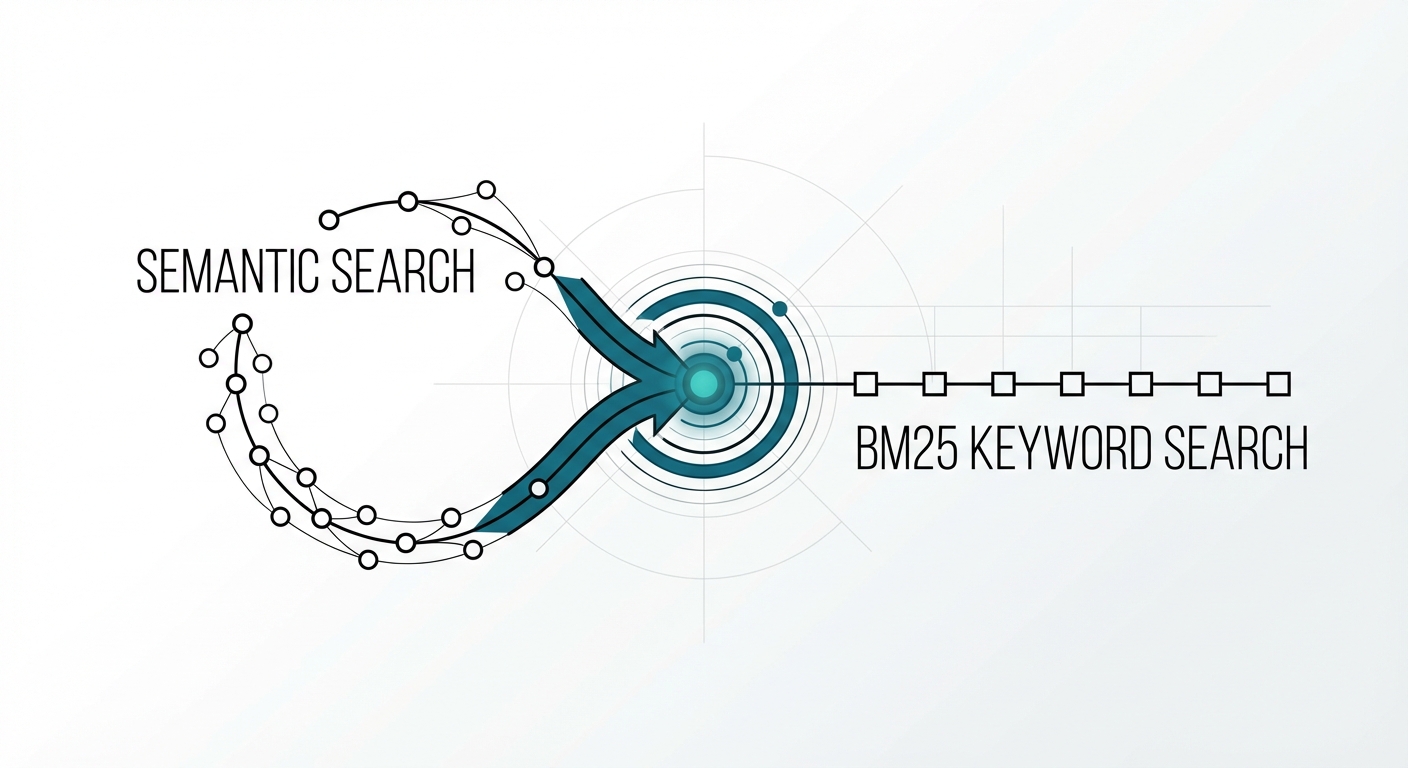

The Retrieval Problem No One Talks About You built a RAG system with a state-of-the-art embedding model. Semantic search …