Calliope IDE v1.4.0: Bedrock Support and Smarter Agents

What’s New in v1.4.0 Calliope AI IDE v1.4.0 is our biggest agent reliability release yet. This update brings full …

Every time your team uses a hosted AI coding assistant, something leaves the building.

Your source code. Your database schemas. Your business logic. Your proprietary algorithms. Your competitive advantage — tokenized, embedded, and sent to someone else’s servers.

The AI companies will tell you your data is safe. They’ll point to their privacy policies, their SOC 2 badges, their enterprise agreements with data retention clauses. And maybe they mean it today.

But here’s the question nobody in the room wants to ask:

What’s stopping them from using what they learn to compete with you?

This isn’t about whether OpenAI, Anthropic, Google, or anyone else is acting in bad faith right now. It’s about the structure of the relationship.

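When you use a hosted AI assistant across your engineering organization, the provider has access to all of it: the source code, database schemas, business logic, and proprietary algorithms of every team that uses the tool.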

Now imagine you’re a board member at one of these AI companies. You’re sitting on aggregate intelligence about thousands of companies across every industry. You can see patterns in how entire sectors build software. You know which problems are being solved and which aren’t.

That’s not a privacy policy problem. That’s a strategic intelligence problem.

Every major AI provider has made some version of the promise that your code won’t be used to train their models. And most of them have carved out exceptions.

API usage policies differ from consumer product policies. Enterprise tiers differ from free tiers. Today’s policy differs from tomorrow’s policy after an acquisition, a leadership change, or a pivot.

Even setting aside training, the data still transits their infrastructure. It’s processed on their servers. Their employees — some subset of them — have access for debugging, safety review, or incident response. Their systems log things.

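You are trusting that today’s policy survives the next acquisition, leadership change, or pivot; that the employees with access use it only for debugging, safety review, and incident response; and that whatever their systems log stays secure.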

That’s a lot of trust to place in a company whose core business model is building the most capable AI possible — and whose investors expect exponential returns.

Here’s what people miss: individual data points aren’t the risk. The aggregate is.

An AI company doesn’t need to steal your code to threaten your business. If they process code from 10,000 companies in your industry, they develop an aggregate understanding of how that industry builds software: which problems are being solved and which aren’t.

This intelligence is baked into the model weights, the product decisions, the internal roadmaps. It’s not “your data” anymore — it’s emergent knowledge derived from everyone’s data.

And when that AI company decides to launch a product in your vertical — or partners with your competitor, or gets acquired by your competitor — that aggregate intelligence walks right through the door.

This isn’t theoretical. We’ve seen this pattern before.

Cloud providers launched competing products after observing what their customers built on their platforms. App store operators cloned top-performing third-party apps. Search engines used search query data to identify and enter lucrative markets.

The playbook is well-established: provide infrastructure, observe what gets built on it, enter the most attractive markets yourself.

AI coding assistants are infrastructure. The most intimate kind. They don’t just host your application — they read every line of it.

The solution isn’t to stop using AI. It’s to stop sending your intelligence to someone else’s servers.

Run local models.

The state of local/self-hosted AI in 2026 is not where it was two years ago. Open-weight models are genuinely capable. The hardware requirements have dropped. The tooling has matured.

What running local gives you:

There is no trust problem because there is no third party. Your code, your business logic, your prompts — all of it stays on infrastructure you control. No privacy policies to parse. No exceptions to worry about. No aggregate intelligence pool you’re contributing to.
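Keeping code on infrastructure you control is something you can check, not just assume. A minimal sketch, assuming your assistant reaches its model over an HTTP URL: before anything is sent, confirm that the endpoint’s host resolves only to loopback or private-range addresses (as Python’s `ipaddress` module classifies them). The function name `endpoint_stays_on_your_network` is hypothetical, not part of Calliope or any library, and this is a pre-flight sanity check, not a replacement for egress firewall rules.

```python
import ipaddress
import socket
from urllib.parse import urlparse


def endpoint_stays_on_your_network(url: str) -> bool:
    """Return True only if every address the URL's host resolves to is a
    loopback or private-range address, as classified by Python's ipaddress
    module. Hypothetical helper; not part of Calliope or any library."""
    host = urlparse(url).hostname
    if host is None:
        return False  # no host at all: refuse rather than guess
    try:
        # Each result is (family, type, proto, canonname, sockaddr);
        # sockaddr[0] is the IP address string for IPv4 and IPv6 alike.
        results = socket.getaddrinfo(host, None)
    except socket.gaierror:
        return False  # unresolvable host: treat it as not yours
    addresses = {result[4][0] for result in results}
    if not addresses:
        return False
    for addr in addresses:
        ip = ipaddress.ip_address(addr)
        if not (ip.is_loopback or ip.is_private):
            return False  # at least one public address: data could leave
    return True
```

For example, `endpoint_stays_on_your_network("http://127.0.0.1:8080/v1")` returns `True`, while a URL pointing at a public address returns `False`. Checking every resolved address, rather than the first one, matters because a hostname can resolve to a mix of private and public addresses.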

You choose what model to run. You fine-tune it on your codebase if you want. You decide when to update it. You’re not subject to surprise model changes that break your workflows or degrade quality on your specific use cases.

When your AI workflow runs on your infrastructure with open models, you can switch models, modify them, or run multiple models for different tasks. Your accumulated context and configuration belongs to you.
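In practice, “your configuration belongs to you” can be as plain as a task-to-model mapping checked into your own repository. A minimal sketch, assuming a tool that lets you choose the model per task; the model names and the `model_for` helper are hypothetical placeholders, not real model identifiers or a Calliope API.

```python
from typing import Mapping, Optional

# Hypothetical model names: substitute the open-weight models you actually
# serve. Because this mapping lives in your own repository, moving a task
# to a different model is a one-line, reviewable change.
TASK_MODELS: Mapping[str, str] = {
    "completion": "local-coder-small",
    "refactor": "local-coder-large",
    "tests": "local-coder-large",
    "docs": "local-general",
}
DEFAULT_MODEL = "local-general"


def model_for(task: str, overrides: Optional[Mapping[str, str]] = None) -> str:
    """Pick the model for a task: per-call overrides first, then the team
    mapping, then a default, so no choice depends on a vendor's defaults."""
    if overrides and task in overrides:
        return overrides[task]
    return TASK_MODELS.get(task, DEFAULT_MODEL)
```

Trying a fine-tuned model for one task is then an override such as `model_for("refactor", {"refactor": "local-coder-finetuned"})`, with everything else unchanged.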

If you’re in a regulated industry — finance, healthcare, defense, government — running local solves the data residency question completely. Your data doesn’t leave your jurisdiction, full stop.

No per-token pricing that scales with your team’s adoption. No surprise bills when your engineers use the tool more than projected. Buy the hardware (or rent the GPU instances), run the model, done.
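The cost argument comes down to a break-even calculation: per-token spend grows linearly with usage, while a self-hosted deployment costs roughly the same each month however heavily your team uses it. A minimal sketch; the figures below are hypothetical placeholders, not any provider’s actual rates, and the fixed cost should fold in everything self-hosting really costs (amortized hardware or instance rental, power, and the time to operate it).

```python
def monthly_api_cost(tokens_per_month: float, price_per_million: float) -> float:
    """Per-token pricing: the bill scales linearly with usage."""
    return tokens_per_month / 1_000_000 * price_per_million


def break_even_tokens(fixed_monthly_cost: float, price_per_million: float) -> float:
    """Monthly token volume above which a flat self-hosting cost is cheaper
    than paying per token. Both inputs are your own estimates."""
    if price_per_million <= 0:
        raise ValueError("price_per_million must be positive")
    return fixed_monthly_cost / price_per_million * 1_000_000


# Hypothetical inputs: $2,000/month to self-host, $10 per million tokens via an API.
threshold = break_even_tokens(2_000, 10)
print(f"Self-hosting is cheaper above {threshold:,.0f} tokens/month")
```

With these placeholder inputs the threshold is 200 million tokens a month. At twice that volume the API bill doubles to $4,000 while the self-hosted cost stays flat, which is exactly the “scales with your team’s adoption” effect.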

Local models don’t need to be the best model ever made. They need to be good enough for your use case.

For code completion, refactoring, test generation, and documentation — the tasks that make up 80% of AI-assisted development — open models running locally are more than capable. Models like Llama, Qwen, DeepSeek, Mistral and others are competitive on coding benchmarks and practical tasks.

For the remaining 20% — the genuinely hard reasoning tasks — you can make a deliberate choice about what to send externally, with full awareness of what you’re exposing. That’s different from piping everything through a third party by default.

The question isn’t “is GPT-4 better than a local model?” The question is: “is the marginal quality difference worth handing over your competitive intelligence?”

For most organizations, the answer is no.

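A practical local-first AI development setup: an open-weight model on hardware you own (or GPU instances you rent) handles everyday completion, refactoring, test generation, and documentation, and only the hard reasoning tasks you deliberately choose go to an external provider.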

This isn’t all-or-nothing. It’s about making the default private and the exception conscious.
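“Default private, exception conscious” can be enforced in code rather than left to habit. A minimal sketch, assuming your tooling lets you put a small router in front of two model clients; `Request`, `route`, the `EXTERNAL_ALLOWED_TASKS` allowlist, and the task names are all hypothetical. Every request goes to the local model unless it both opts in explicitly and belongs to a task your team has approved for external use, and every external call is logged.

```python
import logging
from dataclasses import dataclass
from typing import Callable

log = logging.getLogger("ai-routing")

# Hypothetical allowlist: the only task your team has agreed may leave.
EXTERNAL_ALLOWED_TASKS = frozenset({"hard-reasoning"})


@dataclass(frozen=True)
class Request:
    task: str
    prompt: str
    allow_external: bool = False  # must be set deliberately, per request


def route(
    req: Request,
    call_local: Callable[[str], str],
    call_external: Callable[[str], str],
) -> str:
    """Send a request to the local model by default; go external only on an
    explicit opt-in for an approved task, and log that it happened."""
    if not req.allow_external:
        return call_local(req.prompt)
    if req.task not in EXTERNAL_ALLOWED_TASKS:
        raise PermissionError(f"task {req.task!r} is not approved for external models")
    # Record what left your infrastructure and why, so the exception stays visible.
    log.warning("external model call: task=%s, %d chars sent", req.task, len(req.prompt))
    return call_external(req.prompt)


def fake_local(prompt: str) -> str:
    return f"local: {prompt}"


def fake_external(prompt: str) -> str:
    return f"external: {prompt}"


if __name__ == "__main__":
    print(route(Request("refactor", "rename this function"), fake_local, fake_external))
    print(route(Request("hard-reasoning", "design a scheduler", allow_external=True),
                fake_local, fake_external))
```

Raising on an unapproved opt-in, instead of silently falling back to the local model, makes a mistaken attempt to send code out visible to the engineer who made it.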

Quis custodiet ipsos custodes? — Who watches the watchmen?

In the current AI landscape, nobody does. You’re trusting AI companies to be disciplined stewards of the most detailed intelligence ever collected about how the world’s software gets built.

That’s not a reasonable trust assumption. It’s a hope.

The alternative is simple: keep your intelligence on your infrastructure, use open models that are accountable to you, and make conscious choices about what — if anything — you share with external providers.

Your code is your business. Stop uploading it to someone else’s.

Calliope is built for teams that want AI-powered development tools on their own infrastructure. Local models. Your data. Your control.


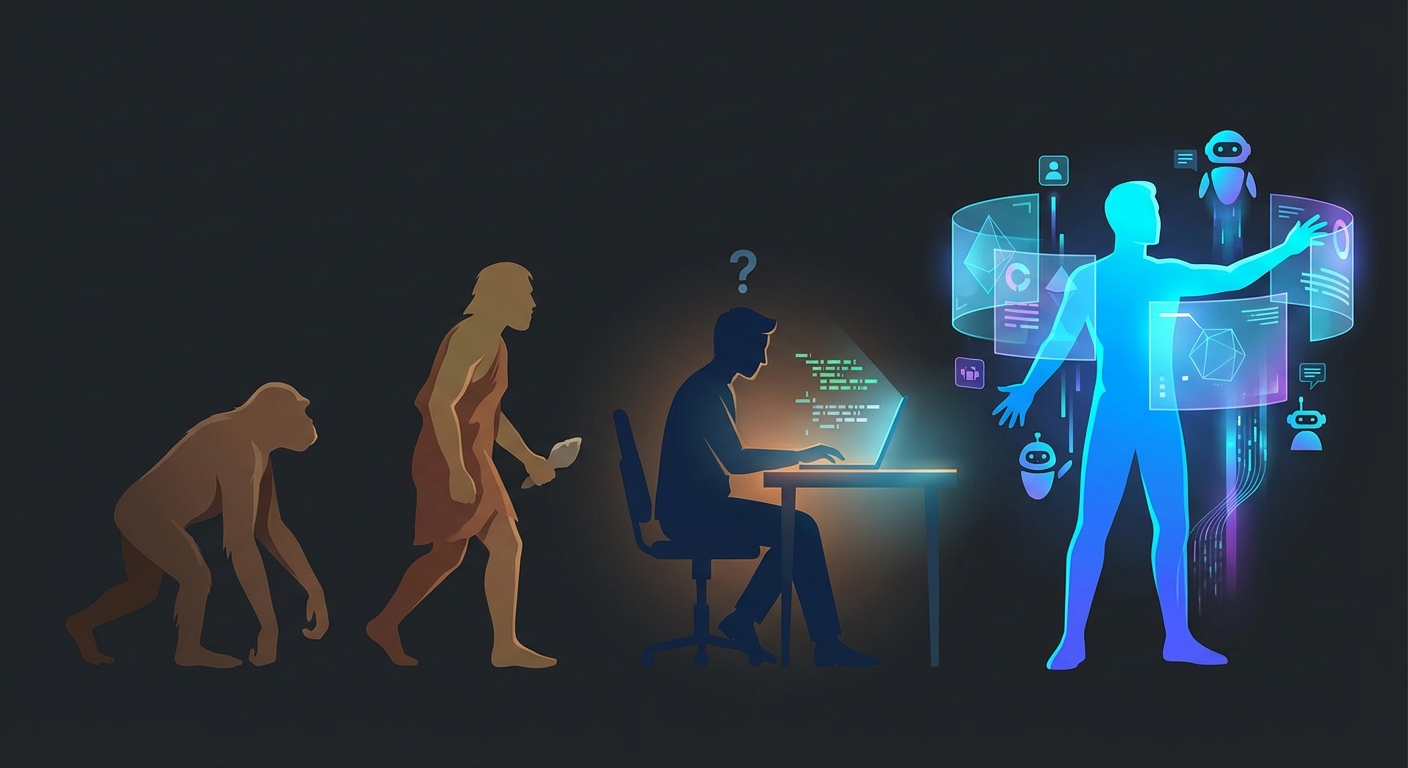

The Three Eras of AI-Assisted Development In less than four years, the way developers use AI has gone through three …