Building Production RAG: An End-to-End Implementation Guide

From Prototype to Production: What It Actually Takes Most RAG tutorials stop at “put documents in a vector …

You built a RAG system with a state-of-the-art embedding model. Semantic search works beautifully for conceptual questions. Then a user asks for error code ERR_CONN_REFUSED_4032 and the system returns results about network connectivity in general, completely missing the document that contains the exact error code.

Or someone searches for “SOC 2 Type II audit requirements” and gets results about security auditing concepts instead of the specific compliance framework.

This is the fundamental limitation of relying on a single retrieval method. Semantic search and keyword search fail in complementary ways. The solution is to stop choosing between them and use both.

Hybrid search combines BM25 (keyword-based) and vector (semantic) retrieval, fusing their results into a single ranked list. It is one of the highest-impact improvements you can make to a RAG pipeline, and it requires surprisingly little code.

Vector search encodes queries and documents into dense embeddings, then retrieves by cosine similarity. This captures meaning, not tokens. It handles synonyms, paraphrasing, and conceptual similarity well.
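To make "retrieves by cosine similarity" concrete, here is a minimal sketch in pure Python. The three-dimensional vectors and the document IDs are made up for illustration; a real system would get its vectors from an embedding model and use an approximate nearest-neighbor index rather than a full scan.

```python
import math

def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two vectors; 0.0 if either is all zeros."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0
    return dot / (norm_a * norm_b)

def top_k_by_cosine(
    query_vec: list[float],
    doc_vecs: dict[str, list[float]],
    k: int = 3,
) -> list[tuple[str, float]]:
    """Brute-force scan: score every document, return the k most similar."""
    scored = [(doc_id, cosine_similarity(query_vec, vec)) for doc_id, vec in doc_vecs.items()]
    return sorted(scored, key=lambda x: x[1], reverse=True)[:k]

# Toy "embeddings" (hypothetical values, not produced by a real model)
docs = {
    "kill-process": [0.9, 0.1, 0.0],
    "deploy-guide": [0.1, 0.9, 0.2],
}
query = [0.8, 0.2, 0.0]  # stands in for "how to terminate a process"
results = top_k_by_cosine(query, docs, k=2)
```

The query and the "kill-process" vector point in nearly the same direction, so that document ranks first even though the two texts would share few keywords.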

But dense embeddings struggle with several categories of queries:

Exact identifiers. Product SKUs, error codes, ticket numbers, API endpoint paths. The embedding for SKU-8841-BX carries almost no semantic signal. The model has probably never seen this exact token sequence during training, so it tends to map it to a generic region of the embedding space.

Boolean and structured queries. A user searching for “Python AND asyncio NOT threading” expects keyword logic. Semantic search treats this as a bag of concepts and may surface threading tutorials.

Low-frequency domain terms. Highly specialized jargon, internal acronyms, or newly coined terms may not have meaningful embedding representations. If the embedding model was not trained on your domain vocabulary, it cannot encode it well.

Frequency-based relevance. If a document mentions “Kubernetes” forty times and another mentions it once in passing, BM25 captures that signal. Semantic search does not—embeddings encode meaning, not term frequency.

BM25 (Best Matching 25) is a ranking function based on term frequency and inverse document frequency. It is the backbone of traditional search engines and has been in production systems for decades. It is fast, well-understood, and requires no GPU.
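A compact sketch of the scoring function may help here. It assumes whitespace tokenization, the commonly used defaults k1=1.5 and b=0.75, and a Lucene-style IDF; production engines add analyzers, stemming, and an inverted index, so treat this as an illustration rather than a reference implementation.

```python
import math
from collections import Counter

def bm25_scores(query: str, docs: list[str], k1: float = 1.5, b: float = 0.75) -> list[float]:
    """Score every document against the query with a basic BM25 formula."""
    tokenized = [d.lower().split() for d in docs]
    n_docs = len(tokenized)
    avg_len = sum(len(t) for t in tokenized) / n_docs if n_docs else 0.0

    # Document frequency: in how many documents does each term occur?
    df: Counter = Counter()
    for tokens in tokenized:
        df.update(set(tokens))

    scores = []
    for tokens in tokenized:
        tf = Counter(tokens)
        score = 0.0
        for term in query.lower().split():
            if term not in tf:
                continue  # no lexical overlap, no contribution
            idf = math.log(1 + (n_docs - df[term] + 0.5) / (df[term] + 0.5))
            # Length normalization: long documents need more hits to score equally
            denom = tf[term] + k1 * (1 - b + b * len(tokens) / avg_len)
            score += idf * tf[term] * (k1 + 1) / denom
        scores.append(score)
    return scores

corpus = [
    "connection refused error ERR_CONN_REFUSED_4032 on startup",
    "general guide to network connectivity problems",
    "kill a process on linux",
]
scores = bm25_scores("ERR_CONN_REFUSED_4032", corpus)
```

Only the first document contains the exact error code, so it is the only one with a non-zero score. That is the behavior dense retrieval struggles to reproduce for rare identifiers.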

But BM25 has its own blind spots:

Synonyms and paraphrasing. A query for “how to terminate a process” gets no credit for its key term from a document that only says “kill a process”, because “terminate” never appears in it. BM25 has no concept of meaning.

Conceptual similarity. “Best practices for deploying microservices” and “patterns for running distributed services in production” are semantically similar but share few keywords.

Multilingual retrieval. BM25 is language-bound. A query in English cannot match a relevant document in Spanish without explicit translation.

Vocabulary mismatch. Users and document authors often use different terminology for the same concept. BM25 requires lexical overlap that may not exist.

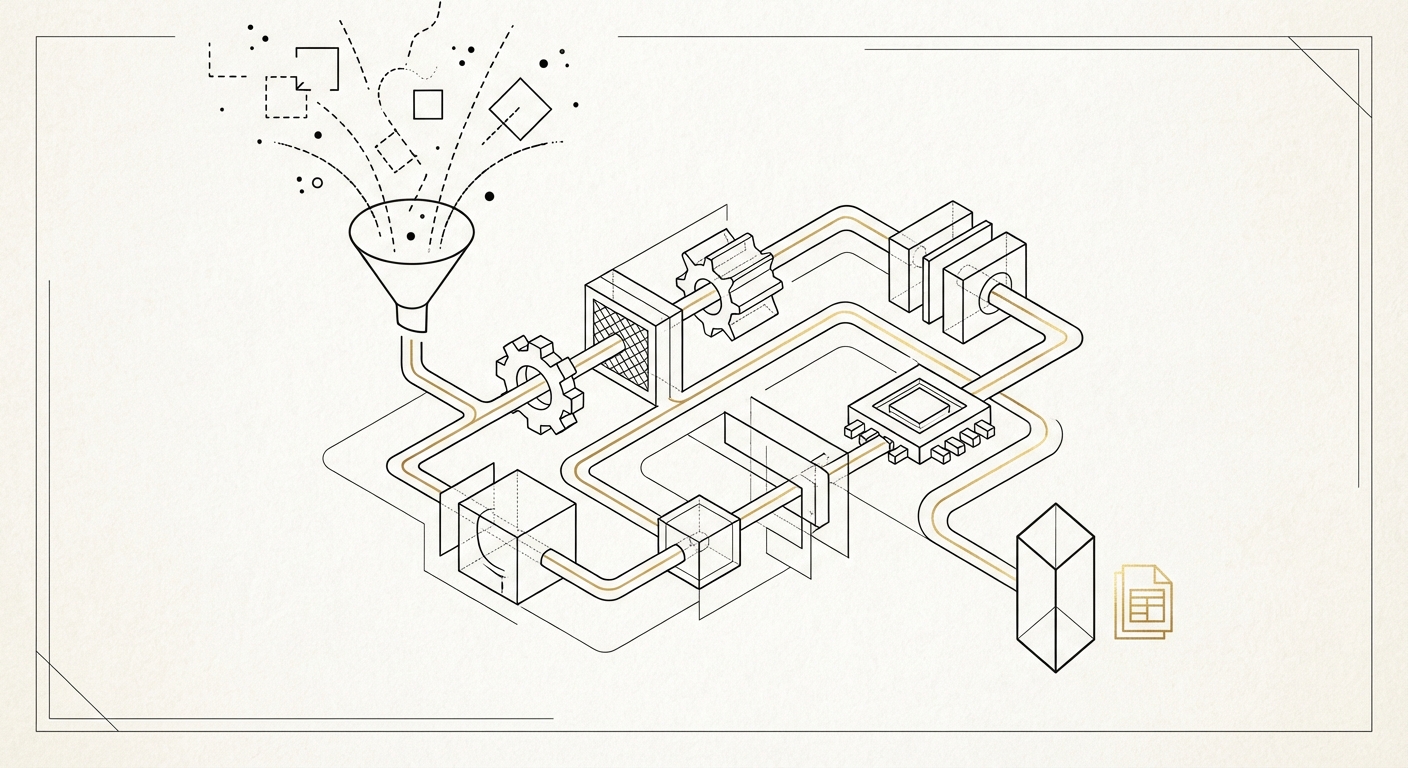

Hybrid search runs both retrieval methods in parallel and merges the results. The typical architecture looks like this:

    User Query
        |
        +--> [BM25 Search]   --> ranked_list_keyword  (top K)
        |
        +--> [Vector Search] --> ranked_list_semantic (top K)
        |
        v
    [Fusion Algorithm]
        |
        v
    Final Ranked Results

Each retriever returns its own ranked list. A fusion algorithm combines these lists into a single ordering. The two most common fusion strategies are Reciprocal Rank Fusion (RRF) and weighted linear combination.

RRF is the simplest and most robust fusion method. For each document, it sums the reciprocal of its rank across all retrieval methods:

    def reciprocal_rank_fusion(
        ranked_lists: list[list[str]],
        k: int = 60
    ) -> list[tuple[str, float]]:
        """
        Combine multiple ranked lists using RRF.

        Args:
            ranked_lists: List of ranked document ID lists.
            k: Smoothing constant (default 60, per the original paper).

        Returns:
            List of (doc_id, score) tuples sorted by fused score.
        """
        fused_scores: dict[str, float] = {}
        for ranked_list in ranked_lists:
            for rank, doc_id in enumerate(ranked_list, start=1):
                fused_scores[doc_id] = fused_scores.get(doc_id, 0.0) + 1.0 / (k + rank)
        return sorted(fused_scores.items(), key=lambda x: x[1], reverse=True)

RRF has a useful property: it does not require score normalization. BM25 scores and cosine similarity scores live on completely different scales. RRF sidesteps this by using only rank positions. The constant k=60 controls how much weight top-ranked results get relative to lower-ranked ones. Higher values of k flatten the distribution.
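The effect of k is easier to see with numbers. The sketch below re-declares a compact RRF helper (named `rrf` here only so the block runs on its own) and fuses two hypothetical lists: document Y is ranked fourth by both retrievers, while X is ranked first by only one of them.

```python
def rrf(ranked_lists: list[list[str]], k: int = 60) -> list[tuple[str, float]]:
    """Compact RRF: sum 1 / (k + rank) over every list a document appears in."""
    scores: dict[str, float] = {}
    for ranked in ranked_lists:
        for rank, doc_id in enumerate(ranked, start=1):
            scores[doc_id] = scores.get(doc_id, 0.0) + 1.0 / (k + rank)
    return sorted(scores.items(), key=lambda x: x[1], reverse=True)

# Hypothetical document IDs
keyword_list = ["X", "p", "q", "Y"]
semantic_list = ["r", "s", "t", "Y"]

# k=60: Y scores 2/64 = 0.03125, X scores 1/61 ~ 0.0164, so agreement wins
top_default = rrf([keyword_list, semantic_list], k=60)[0][0]

# k=1: Y scores 2/5 = 0.4, X scores 1/2 = 0.5, so a single first place wins
top_small_k = rrf([keyword_list, semantic_list], k=1)[0][0]

# How much more a rank-1 hit is worth than a rank-10 hit
ratio_k60 = (1 / (60 + 1)) / (1 / (60 + 10))  # ~1.15
ratio_k1 = (1 / (1 + 1)) / (1 / (1 + 10))     # 5.5
```

With k=60 a first-place result is worth only about 15% more than a tenth-place one, so documents that both retrievers agree on rise to the top. A small k makes the fusion behave more like "trust whichever retriever ranked it first".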

When you want more control, normalize scores from each retriever and combine them with explicit weights:

    import numpy as np

    def normalize_scores(scores: list[float]) -> list[float]:
        """Min-max normalize scores to [0, 1]."""
        if not scores:
            # An empty result list has nothing to normalize (and min() would raise)
            return []
        arr = np.array(scores, dtype=float)
        min_val, max_val = arr.min(), arr.max()
        if max_val - min_val == 0:
            # All scores identical: no ranking signal, so use a neutral value
            return [0.5] * len(scores)
        return ((arr - min_val) / (max_val - min_val)).tolist()

    def weighted_fusion(
        keyword_results: list[tuple[str, float]],
        semantic_results: list[tuple[str, float]],
        alpha: float = 0.5,
    ) -> list[tuple[str, float]]:
        """
        Combine keyword and semantic results with weighted scoring.

        Args:
            keyword_results: List of (doc_id, bm25_score) tuples.
            semantic_results: List of (doc_id, cosine_score) tuples.
            alpha: Weight for semantic scores (1-alpha for keyword).

        Returns:
            List of (doc_id, fused_score) tuples sorted descending.
        """
        # Normalize both score distributions
        kw_ids, kw_scores = zip(*keyword_results) if keyword_results else ([], [])
        sem_ids, sem_scores = zip(*semantic_results) if semantic_results else ([], [])

        kw_norm = dict(zip(kw_ids, normalize_scores(list(kw_scores))))
        sem_norm = dict(zip(sem_ids, normalize_scores(list(sem_scores))))

        # A document missing from one list contributes 0.0 for that retriever
        all_ids = set(kw_norm.keys()) | set(sem_norm.keys())
        fused = []
        for doc_id in all_ids:
            kw_score = kw_norm.get(doc_id, 0.0)
            sem_score = sem_norm.get(doc_id, 0.0)
            combined = (1 - alpha) * kw_score + alpha * sem_score
            fused.append((doc_id, combined))

        return sorted(fused, key=lambda x: x[1], reverse=True)

The alpha parameter controls the balance. At alpha=1.0, you get pure semantic search. At alpha=0.0, pure keyword. The optimal value depends on your domain and query distribution.
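A small worked example shows how alpha flips the ranking. The scores below are hypothetical and already normalized to [0, 1]; `fuse` is a stripped-down stand-in for the weighted combination, written so the block runs on its own.

```python
def fuse(
    kw_norm: dict[str, float],
    sem_norm: dict[str, float],
    alpha: float,
) -> list[tuple[str, float]]:
    """Weighted sum of already-normalized scores; missing entries count as 0.0."""
    ids = set(kw_norm) | set(sem_norm)
    fused = [
        (doc_id, (1 - alpha) * kw_norm.get(doc_id, 0.0) + alpha * sem_norm.get(doc_id, 0.0))
        for doc_id in ids
    ]
    # Sort by score descending, then by ID so ties are deterministic
    return sorted(fused, key=lambda x: (-x[1], x[0]))

# Hypothetical normalized scores for two documents
kw_norm = {"exact-match-doc": 1.0, "overview-doc": 0.2}
sem_norm = {"exact-match-doc": 0.3, "overview-doc": 1.0}

keyword_heavy = fuse(kw_norm, sem_norm, alpha=0.2)   # exact-match-doc: 0.86, overview-doc: 0.36
semantic_heavy = fuse(kw_norm, sem_norm, alpha=0.8)  # exact-match-doc: 0.44, overview-doc: 0.84
```

The same two documents swap places purely because of alpha, which is why the value needs to be tuned against real queries rather than guessed.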

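Elasticsearch 8.x supports both BM25 and kNN vector search natively, making it one of the most practical choices for hybrid search. When a request carries both a query and a knn clause, Elasticsearch adds the boosted scores of the two together for each hit. The raw BM25 score is unbounded and is not normalized in this request, so alpha acts as a rough weighting here rather than an exact blend on a [0, 1] scale.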

    from elasticsearch import Elasticsearch

    def hybrid_search_elasticsearch(
        es: Elasticsearch,
        index: str,
        query_text: str,
        query_vector: list[float],
        top_k: int = 20,
        alpha: float = 0.5,
    ) -> list[dict]:
        """
        Hybrid BM25 + kNN search using Elasticsearch 8.x.
        """
        response = es.search(
            index=index,
            body={
                "size": top_k,
                "query": {
                    "bool": {
                        "should": [
                            {
                                "match": {
                                    "content": {
                                        "query": query_text,
                                        "boost": 1 - alpha,
                                    }
                                }
                            }
                        ]
                    }
                },
                "knn": {
                    "field": "content_vector",
                    "query_vector": query_vector,
                    "k": top_k,
                    "num_candidates": top_k * 5,
                    "boost": alpha,
                },
            },
        )

        return [
            {
                "id": hit["_id"],
                "score": hit["_score"],
                "content": hit["_source"]["content"],
            }
            for hit in response["hits"]["hits"]
        ]

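If your stack already uses PostgreSQL, you can implement hybrid search without adding another database. Combine pgvector for semantic search with PostgreSQL’s built-in full-text search (tsvector) for the keyword side. Note that ts_rank_cd is not BM25: it ranks by term frequency and proximity without corpus-wide IDF weighting, so treat it as a BM25-style stand-in.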

    -- pgvector must be installed and enabled before the VECTOR type exists
    CREATE EXTENSION IF NOT EXISTS vector;

    -- Schema: documents table with both vector and full-text indexes
    CREATE TABLE documents (
        id SERIAL PRIMARY KEY,
        content TEXT NOT NULL,
        embedding VECTOR(1536),  -- pgvector column
        content_tsv TSVECTOR     -- full-text search column
    );

    -- Indexes
    CREATE INDEX idx_embedding ON documents USING ivfflat (embedding vector_cosine_ops);
    CREATE INDEX idx_content_tsv ON documents USING gin (content_tsv);

    -- Keep tsvector in sync
    CREATE TRIGGER tsvector_update BEFORE INSERT OR UPDATE ON documents
    FOR EACH ROW EXECUTE FUNCTION tsvector_update_trigger(content_tsv, 'pg_catalog.english', content);

The Python side runs both queries and fuses the ranked IDs with the reciprocal_rank_fusion function defined earlier:

    import psycopg2  # conn below is expected to be a psycopg2 connection

    def hybrid_search_postgres(
        conn,
        query_text: str,
        query_embedding: list[float],
        top_k: int = 20,
        alpha: float = 0.5,
    ) -> list[dict]:
        """
        Hybrid search using PostgreSQL with pgvector + full-text search.
        Uses RRF to combine results from both retrieval methods.

        Note: alpha is accepted so the signature matches the other search
        functions, but RRF is rank-based and does not use it.
        """
        with conn.cursor() as cursor:
            # BM25-style full-text search
            cursor.execute(
                """
                SELECT id, content, ts_rank_cd(content_tsv, plainto_tsquery('english', %s)) AS rank
                FROM documents
                WHERE content_tsv @@ plainto_tsquery('english', %s)
                ORDER BY rank DESC
                LIMIT %s
                """,
                (query_text, query_text, top_k),
            )
            keyword_results = cursor.fetchall()

            # Semantic search via pgvector (<=> is cosine distance)
            cursor.execute(
                """
                SELECT id, content, 1 - (embedding <=> %s::vector) AS similarity
                FROM documents
                ORDER BY embedding <=> %s::vector
                LIMIT %s
                """,
                (query_embedding, query_embedding, top_k),
            )
            semantic_results = cursor.fetchall()

        # Fuse with RRF: only the rank order of the IDs matters
        keyword_ranked = [row[0] for row in keyword_results]
        semantic_ranked = [row[0] for row in semantic_results]
        fused = reciprocal_rank_fusion([keyword_ranked, semantic_ranked])

        # Build result set
        all_docs = {row[0]: row[1] for row in keyword_results + semantic_results}
        return [
            {"id": doc_id, "score": score, "content": all_docs.get(doc_id, "")}
            for doc_id, score in fused[:top_k]
        ]

The right alpha value is not universal. It depends on your query distribution and document corpus. Here are practical guidelines based on query type.

Favor keyword search (alpha 0.2-0.4) when:

Favor semantic search (alpha 0.6-0.8) when:

Equal weighting (alpha ~0.5) when:

Empirical tuning process:

    from dataclasses import dataclass

    @dataclass
    class EvalQuery:
        query: str
        expected_doc_ids: list[str]

    def evaluate_alpha(
        eval_queries: list[EvalQuery],
        search_fn,
        alpha_values: list[float],
    ) -> dict[float, float]:
        """
        Evaluate retrieval quality across alpha values.
        Returns {alpha: mean_recall@10} mapping.
        """
        results = {}
        for alpha in alpha_values:
            recalls = []
            for eq in eval_queries:
                retrieved = search_fn(eq.query, alpha=alpha, top_k=10)
                retrieved_ids = {r["id"] for r in retrieved}
                expected = set(eq.expected_doc_ids)
                recall = len(retrieved_ids & expected) / len(expected) if expected else 0
                recalls.append(recall)
            results[alpha] = sum(recalls) / len(recalls)
        return results

    # Usage
    alpha_sweep = [0.0, 0.1, 0.2, 0.3, 0.4, 0.5, 0.6, 0.7, 0.8, 0.9, 1.0]
    scores = evaluate_alpha(eval_set, my_hybrid_search, alpha_sweep)
    best_alpha = max(scores, key=scores.get)

Build an evaluation set of 50-100 queries with known relevant documents. Sweep alpha from 0 to 1 in increments of 0.1. Measure recall@10 and nDCG. The optimal alpha typically lands between 0.3 and 0.7 for most domains.
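The evaluation loop above reports recall@10 only. A minimal nDCG@k sketch, assuming binary relevance (a document is either in the expected set or it is not), could look like the following. Graded relevance labels would need a gain per document instead of 0 or 1.

```python
import math

def dcg_at_k(gains: list[int], k: int) -> float:
    """Discounted cumulative gain: each gain is divided by log2(position + 1)."""
    return sum(gain / math.log2(i + 2) for i, gain in enumerate(gains[:k]))

def ndcg_at_k(retrieved_ids: list[str], relevant_ids: set[str], k: int = 10) -> float:
    """nDCG@k with binary relevance; 0.0 when there are no relevant documents."""
    gains = [1 if doc_id in relevant_ids else 0 for doc_id in retrieved_ids[:k]]
    # Ideal ranking: all relevant documents placed at the top
    ideal_gains = [1] * min(len(relevant_ids), k)
    ideal_dcg = dcg_at_k(ideal_gains, k)
    return dcg_at_k(gains, k) / ideal_dcg if ideal_dcg > 0 else 0.0

perfect = ndcg_at_k(["a", "b"], {"a"})   # relevant doc at rank 1
demoted = ndcg_at_k(["b", "a"], {"a"})   # relevant doc at rank 2
```

Unlike recall@10, nDCG rewards putting the relevant document higher in the list, which matters when only the first few chunks make it into the LLM context.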

A static alpha is a good starting point, but production systems can do better. Different queries within the same system benefit from different balances.

    import re

    # Identifiers such as SKU-8841 or ERR_CONN_REFUSED_4032 (hyphen or underscore before digits)
    IDENTIFIER = re.compile(r'[A-Z]{2,}[-_]\d{3,}')
    LABELED_VALUE = re.compile(r'(?:error|code|id|sku)\s*[:=#]\s*\S+', re.I)
    # Quoted phrases; the lookarounds keep apostrophes in words like "what's" from matching
    QUOTED_PHRASE = re.compile(r"\"[^\"]+\"|(?<!\w)'[^']+'(?!\w)")

    def classify_query(query: str) -> float:
        """
        Return alpha value based on query characteristics.
        Higher alpha = more semantic weight.
        """
        # Exact identifier patterns: product codes, error codes, IDs
        if IDENTIFIER.search(query) or LABELED_VALUE.search(query):
            return 0.2  # Favor keyword

        # Quoted exact phrases
        if QUOTED_PHRASE.search(query):
            return 0.3

        # Short queries (1-2 words) are likely keyword searches
        if len(query.split()) <= 2:
            return 0.3

        # Natural language questions
        if query.strip().endswith('?') or query.lower().startswith(('how', 'what', 'why', 'when', 'where', 'explain', 'describe')):
            return 0.7  # Favor semantic

        # Default balanced
        return 0.5

This is a simple heuristic, but it covers the most common failure modes. You can extend it with a lightweight classifier trained on your query logs.

Latency. Running two retrieval methods adds latency. Mitigate this by running BM25 and vector search in parallel. Both calls are independent—use async or threading. In practice, BM25 is fast enough (single-digit milliseconds) that it rarely becomes the bottleneck.
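One way to run the two retrievers concurrently is a small thread pool, sketched below. The `fake_keyword_search` and `fake_vector_search` functions are placeholders that only sleep and return canned IDs; in a real system they would be your BM25 and vector search calls, which are assumed here to be blocking I/O.

```python
import time
from concurrent.futures import ThreadPoolExecutor
from typing import Callable

Retriever = Callable[[str, int], list[str]]

def retrieve_in_parallel(
    query: str,
    keyword_search: Retriever,
    vector_search: Retriever,
    top_k: int = 20,
) -> tuple[list[str], list[str]]:
    """Submit both searches at once; total wait is roughly the slower of the two."""
    with ThreadPoolExecutor(max_workers=2) as pool:
        kw_future = pool.submit(keyword_search, query, top_k)
        vec_future = pool.submit(vector_search, query, top_k)
        return kw_future.result(), vec_future.result()

# Placeholder retrievers (hypothetical): simulate network latency with sleep
def fake_keyword_search(query: str, top_k: int) -> list[str]:
    time.sleep(0.05)
    return ["doc-3", "doc-1"][:top_k]

def fake_vector_search(query: str, top_k: int) -> list[str]:
    time.sleep(0.05)
    return ["doc-1", "doc-2"][:top_k]

keyword_ids, vector_ids = retrieve_in_parallel(
    "connection refused", fake_keyword_search, fake_vector_search, top_k=2
)
```

Threads are enough here because both calls spend their time waiting on the network. If your clients are already async, gathering the two coroutines achieves the same thing.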

Index maintenance. You now maintain two indexes: an inverted index for BM25 and a vector index for embeddings. When documents are added or updated, both must be kept in sync. Use a single ingestion pipeline that writes to both.
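A single ingestion function that writes to both indexes might be sketched as follows. `KeywordIndex` and `VectorIndex` are toy in-memory stand-ins, and `embed` is a hypothetical callable wrapping your embedding model; none of these names come from a real library. Retries and partial-failure recovery are deliberately left out.

```python
from typing import Callable

class KeywordIndex:
    """Toy inverted index: term -> set of document IDs."""
    def __init__(self) -> None:
        self.postings: dict[str, set[str]] = {}
        self.doc_terms: dict[str, set[str]] = {}

    def upsert(self, doc_id: str, text: str) -> None:
        self.delete(doc_id)  # drop stale postings before re-indexing
        terms = set(text.lower().split())
        self.doc_terms[doc_id] = terms
        for term in terms:
            self.postings.setdefault(term, set()).add(doc_id)

    def delete(self, doc_id: str) -> None:
        for term in self.doc_terms.pop(doc_id, set()):
            self.postings[term].discard(doc_id)

class VectorIndex:
    """Toy vector store: document ID -> embedding."""
    def __init__(self) -> None:
        self.vectors: dict[str, list[float]] = {}

    def upsert(self, doc_id: str, vector: list[float]) -> None:
        self.vectors[doc_id] = vector

    def delete(self, doc_id: str) -> None:
        self.vectors.pop(doc_id, None)

def ingest(
    doc_id: str,
    text: str,
    embed: Callable[[str], list[float]],
    keyword_index: KeywordIndex,
    vector_index: VectorIndex,
) -> None:
    """Write one document to both indexes in a single call."""
    vector = embed(text)  # embed first: if this raises, neither index has changed
    keyword_index.upsert(doc_id, text)
    vector_index.upsert(doc_id, vector)

def fake_embed(text: str) -> list[float]:
    """Placeholder embedding (hypothetical): just the text length."""
    return [float(len(text))]

kw_index, vec_index = KeywordIndex(), VectorIndex()
ingest("d1", "old text", fake_embed, kw_index, vec_index)
ingest("d1", "new text", fake_embed, kw_index, vec_index)  # update replaces both entries
```

Routing every add and update through one function like this keeps the two indexes from drifting apart, because no code path can write to one without the other.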

Embedding model selection. Not all embedding models perform equally in hybrid settings. Models trained with hard negatives (e.g., BGE, GTE, E5) tend to produce embeddings that complement BM25 well because they learn to encode semantic nuances that keyword search misses.

Re-ranking. Hybrid retrieval is a first-stage retriever. For highest quality, add a cross-encoder re-ranker on the fused results. The hybrid retriever casts a wide net; the re-ranker applies fine-grained relevance scoring. This two-stage pattern (hybrid retrieve then re-rank) is the current state of the art for production RAG.

Query --> [Hybrid Retrieval: top 50] --> [Cross-Encoder Re-rank: top 10] --> LLM Context
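The second stage can be sketched with a pluggable scoring function. In the block below, `token_overlap` is only a toy stand-in so the example runs without a model; in practice `score_fn` would wrap a cross-encoder that scores each (query, passage) pair. The candidate dictionaries are assumed to have the same `id` and `content` keys returned by the search functions in this article.

```python
from typing import Callable

def rerank(
    query: str,
    candidates: list[dict],
    score_fn: Callable[[str, str], float],
    top_n: int = 10,
) -> list[dict]:
    """Re-score every fused candidate against the query and keep the best top_n."""
    scored = [{**c, "rerank_score": score_fn(query, c["content"])} for c in candidates]
    return sorted(scored, key=lambda c: c["rerank_score"], reverse=True)[:top_n]

def token_overlap(query: str, text: str) -> float:
    """Toy scorer (NOT a cross-encoder): fraction of query tokens found in the text."""
    q_tokens = set(query.lower().split())
    t_tokens = set(text.lower().split())
    return len(q_tokens & t_tokens) / len(q_tokens) if q_tokens else 0.0

# Hypothetical fused candidates from the hybrid retriever
candidates = [
    {"id": "a", "content": "general notes on network connectivity"},
    {"id": "b", "content": "fix for ERR_CONN_REFUSED_4032 connection error"},
]
reranked = rerank("ERR_CONN_REFUSED_4032 error", candidates, token_overlap, top_n=1)
```

Because the re-ranker only sees the 50 or so fused candidates, an expensive scorer stays affordable: its cost scales with the candidate count, not with the corpus size.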

Calliope AI’s platform supports hybrid retrieval patterns out of the box. The AI Workbench provides the compute environment to build, tune, and evaluate hybrid search pipelines. Use JupyterLab notebooks to experiment with alpha values, run evaluation sweeps, and prototype fusion strategies. When the pipeline is production-ready, deploy it as a service directly from the platform—no infrastructure overhead, no separate search cluster to manage.

Pure semantic search and pure keyword search both leave retrieval quality on the table. Hybrid search picks up what each method drops:

The implementation is straightforward. If you already have a vector database and a search index, adding hybrid retrieval is a matter of running both queries and merging the results. Start with RRF, which needs no weights, and build an evaluation set. If you need more control, switch to weighted fusion at alpha=0.5 and tune from there.

For most RAG systems, hybrid search is the single biggest retrieval improvement available—and it takes an afternoon to implement.
