Building Production RAG: An End-to-End Implementation Guide

From Prototype to Production: What It Actually Takes Most RAG tutorials stop at “put documents in a vector …

Here’s the situation most compliance teams are living in right now: the EU AI Act’s high-risk requirements apply from August 2026. Colorado’s AI Act takes effect in February 2026. Texas passed SB 1993. California is pushing transparency requirements for automated decision-making. HIPAA enforcement is tightening around AI-assisted clinical workflows. SOC 2 auditors are asking about model governance for the first time.

And that’s just the short list. Gartner projects that AI-specific regulation will quadruple by 2030, extending to 75% of the world’s economies. One in four compliance audits this year will include specific AI governance inquiries. The regulatory surface area is expanding faster than any compliance team can staff for.

The traditional response — hire more analysts, buy more GRC software, run more manual reviews — doesn’t scale. You can’t throw headcount at a problem that multiplies every time a new jurisdiction publishes a draft framework.

So teams are starting to do the obvious thing: use agents to do the compliance work itself.

The same technology creating the compliance burden is also the most capable tool for managing it.

AI agents can read regulatory text, classify systems against risk frameworks, generate documentation, monitor audit logs, flag violations, and produce reports — continuously, across jurisdictions, at a speed no human team can match. The irony is real, and it’s also practical. If you need to assess 200 AI systems against the EU AI Act’s risk classification taxonomy, you’re not doing that with a spreadsheet and a legal intern. You’re doing it with an agent that understands the regulation and can systematically evaluate each system.

But the irony cuts both ways. The agents doing your compliance work are themselves AI systems that need to be governed. If your compliance automation isn’t auditable, explainable, and controlled, you’ve just moved the problem one layer deeper.

This isn’t theoretical. Teams are running these patterns in production right now. Here’s what the concrete workflows look like.

The EU AI Act requires organizations to classify their AI systems by risk level — unacceptable, high, limited, or minimal — and apply corresponding obligations. For high-risk systems, that means conformity assessments, technical documentation, risk management systems, data governance practices, human oversight mechanisms, and post-market monitoring.

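Doing this manually for every AI system in a large organization is a multi-month project. An agent workflow handles it differently: it evaluates each system against the Act’s risk classification taxonomy, assigns a provisional risk level, and maps that level to the obligations it triggers.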

The output isn’t a final answer. It’s a structured first pass that takes the workload from months to days and lets your compliance team focus on judgment calls instead of data gathering.
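To make the “structured first pass” concrete, here is a minimal Python sketch of a rule-based triage step. The rule tables (`PROHIBITED_PRACTICES`, `HIGH_RISK_AREAS`, `TRANSPARENCY_TRIGGERS`), the use-case tags, and the `AISystem`/`classify` names are hypothetical and drastically simplified. A real agent would reason over the Act’s actual text (the Article 5 prohibitions and the Annex III high-risk areas), and every result would still go to a human reviewer.

```python
from dataclasses import dataclass, field

# Hypothetical, heavily simplified rule tables for illustration only.
# Real classification requires the Act's text and a compliance professional.
PROHIBITED_PRACTICES = {"social_scoring", "subliminal_manipulation"}
HIGH_RISK_AREAS = {"employment", "education", "credit_scoring",
                   "critical_infrastructure", "biometric_identification"}
TRANSPARENCY_TRIGGERS = {"chatbot", "synthetic_media"}

HIGH_RISK_OBLIGATIONS = [
    "conformity assessment",
    "technical documentation",
    "risk management system",
    "data governance practices",
    "human oversight mechanisms",
    "post-market monitoring",
]

@dataclass
class AISystem:
    name: str
    use_cases: set[str]

@dataclass
class Classification:
    system: str
    risk_level: str
    reasons: list[str]                      # why the rule fired, for reviewers
    obligations: list[str] = field(default_factory=list)
    needs_human_review: bool = True         # always a first pass, never final

def classify(system: AISystem) -> Classification:
    """Assign a provisional risk level, checking the most severe tier first."""
    if hits := system.use_cases & PROHIBITED_PRACTICES:
        return Classification(system.name, "unacceptable",
                              [f"prohibited practice: {h}" for h in sorted(hits)])
    if hits := system.use_cases & HIGH_RISK_AREAS:
        return Classification(system.name, "high",
                              [f"high-risk area: {h}" for h in sorted(hits)],
                              list(HIGH_RISK_OBLIGATIONS))
    if hits := system.use_cases & TRANSPARENCY_TRIGGERS:
        return Classification(system.name, "limited",
                              [f"transparency trigger: {h}" for h in sorted(hits)],
                              ["disclose AI interaction to users"])
    return Classification(system.name, "minimal", ["no rule matched"])

inventory = [
    AISystem("resume-screener", {"employment"}),
    AISystem("support-bot", {"chatbot"}),
    AISystem("spam-filter", {"email_filtering"}),
]
for result in map(classify, inventory):
    print(f"{result.system}: {result.risk_level} ({'; '.join(result.reasons)})")
```

Checking the most severe tier first means a system that matches both a prohibited practice and a high-risk area is reported as unacceptable rather than quietly downgraded. The `reasons` list matters as much as the label: it records why each call was made, so reviewers can spend their time on the judgment calls.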

Healthcare organizations running AI in clinical workflows face a specific problem: HIPAA requires audit trails for every access to protected health information, and AI systems generate a volume of access events that manual review can’t keep up with.

Agent-based audit review works like this: an agent ingests audit logs from your AI systems — every prompt, every response, every data access event — and evaluates each entry against HIPAA access rules. It flags anomalies: a model accessing patient records outside of a clinical context, a user querying for data they shouldn’t have access to, PHI appearing in model outputs that should have been redacted.

The critical detail is that the agent doesn’t just flag violations after the fact. It can operate in near-real-time, catching issues within minutes instead of discovering them during a quarterly audit review. The difference between “we found this in our logs six months later” and “we detected and responded to this within the hour” is the difference between a regulatory finding and a documented, controlled response.
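The rule checks in that audit review can be sketched in a few lines. This is a minimal illustration, not a HIPAA implementation: the `AuditEvent` schema, the `ACCESS_POLICY` role map, the clinical-only resource rule, and the MRN format are all hypothetical, and the regexes are crude stand-ins for a real PHI detector.

```python
import re
from dataclasses import dataclass

# Hypothetical log schema; real access rules are organization-specific.
@dataclass
class AuditEvent:
    event_id: str
    actor: str              # user or model identity
    resource: str           # e.g. "patient_record", "billing_summary"
    context: str            # e.g. "clinical", "analytics"
    model_output: str = ""

# Which identities may read which resources (illustrative only).
ACCESS_POLICY = {
    "dr_lee": {"patient_record"},
    "analyst_kim": {"billing_summary"},
    "triage_model": {"patient_record"},
}
CLINICAL_ONLY = {"patient_record"}

# Crude PHI detectors: a US SSN shape and a hypothetical MRN format.
PHI_PATTERNS = {
    "ssn": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "mrn": re.compile(r"\bMRN[:\s]*\d{6,}\b", re.IGNORECASE),
}

def review(event: AuditEvent) -> list[str]:
    """Return findings for one audit event; an empty list means no rule fired."""
    findings = []
    if event.resource not in ACCESS_POLICY.get(event.actor, set()):
        findings.append(f"{event.actor} is not authorized for {event.resource}")
    if event.resource in CLINICAL_ONLY and event.context != "clinical":
        findings.append(f"{event.resource} accessed in {event.context} context")
    for label, pattern in PHI_PATTERNS.items():
        if pattern.search(event.model_output):
            findings.append(f"unredacted {label} in model output")
    return findings

events = [
    AuditEvent("e1", "dr_lee", "patient_record", "clinical", "BP stable."),
    AuditEvent("e2", "analyst_kim", "patient_record", "analytics"),
    AuditEvent("e3", "triage_model", "patient_record", "clinical",
               "Patient SSN 123-45-6789 noted."),
]
for e in events:
    for finding in review(e):
        print(e.event_id, finding)
```

Calling `review` on each event as it is written, rather than on a quarterly export, is what moves this from after-the-fact discovery to the near-real-time detection described above.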

SOC 2 compliance traditionally operates on an audit cycle: you prepare, the auditors come in, they review your controls, they issue a report. The problem with AI systems is that they change constantly — model updates, configuration changes, new data sources, shifting usage patterns. Point-in-time audits miss drift.

Agents can run SOC 2 control monitoring continuously. They verify that access controls are enforced, that logging is functioning, that encryption is applied where required, that change management processes are followed. When a control drifts out of compliance, the agent generates an alert and a remediation recommendation. When auditors arrive, the agent produces a continuous compliance report covering the entire audit period — not a snapshot of the week before the audit.
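A sketch of that continuous loop, under stated assumptions: configuration snapshots arrive as plain dictionaries, and the four controls, their IDs (`AC-1`, `LOG-1`, and so on), and the remediation text are illustrative placeholders rather than actual SOC 2 criteria. Each run records pass/fail results, so the period report covers every check in the audit window instead of a single snapshot.

```python
from dataclasses import dataclass
from datetime import date
from typing import Callable

# A configuration snapshot of one AI deployment (hypothetical keys).
Snapshot = dict
History = list[tuple[date, str, bool]]

@dataclass
class Control:
    control_id: str                      # illustrative IDs, not official SOC 2 criteria
    description: str
    check: Callable[[Snapshot], bool]
    remediation: str

CONTROLS = [
    Control("AC-1", "MFA enforced for admin access",
            lambda s: s.get("admin_mfa") is True,
            "Re-enable MFA on admin roles."),
    Control("LOG-1", "Audit logging enabled",
            lambda s: s.get("audit_logging") is True,
            "Restore the log pipeline and confirm ingestion."),
    Control("ENC-1", "Encryption at rest",
            lambda s: s.get("storage_encryption") == "aes256",
            "Enable encryption on the storage volume."),
    Control("CM-1", "Every change linked to an approved ticket",
            lambda s: all(c.get("ticket") for c in s.get("changes", [])),
            "File a retroactive change ticket and review the change."),
]

def evaluate(day: date, snapshot: Snapshot, history: History) -> list[str]:
    """Run every control, record each result, and return alerts for failures."""
    alerts = []
    for control in CONTROLS:
        passed = control.check(snapshot)
        history.append((day, control.control_id, passed))
        if not passed:
            alerts.append(f"{day} {control.control_id} drifted: {control.remediation}")
    return alerts

def period_report(history: History) -> dict[str, float]:
    """Share of checks that passed, per control, across the whole audit period."""
    totals: dict[str, list[int]] = {}
    for _, control_id, passed in history:
        ok, n = totals.get(control_id, [0, 0])
        totals[control_id] = [ok + int(passed), n + 1]
    return {cid: ok / n for cid, (ok, n) in totals.items()}

history: History = []
baseline = {"admin_mfa": True, "audit_logging": True,
            "storage_encryption": "aes256", "changes": [{"ticket": "CHG-1"}]}
drifted = {**baseline, "audit_logging": False}
for day, snap in [(date(2026, 3, 1), baseline), (date(2026, 3, 2), drifted)]:
    for alert in evaluate(day, snap, history):
        print(alert)
print(period_report(history))
```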

Policy documents are useful. Policy enforcement is what matters. Most organizations have acceptable use policies for AI that exist in a wiki somewhere and get enforced through hope and good intentions.

Agent-based enforcement turns policies into active controls. Content scanning agents can evaluate prompts and responses against data handling policies in real time — catching sensitive data before it reaches a model, flagging outputs that contain proprietary information, blocking interactions that violate use-case restrictions. Policy inheritance systems can propagate rules from organizational policies down to specific teams, projects, and model deployments automatically.

This isn’t about restricting what people can do. It’s about making the policies you already wrote actually function as controls instead of aspirations.
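Here is a minimal sketch of both ideas together, policy inheritance and prompt scanning. It assumes a hypothetical model in which each level (organization, team, deployment) can add restrictions but never remove ones it inherits, and it uses a few illustrative regex detectors. Production content scanning would use proper classifiers, and the category names would come from your own data handling policy.

```python
import re
from dataclasses import dataclass, field

# Hypothetical policy model: a level may add restrictions but never
# remove restrictions inherited from its parent.
@dataclass
class Policy:
    name: str
    blocked_data: set[str] = field(default_factory=set)
    blocked_use_cases: set[str] = field(default_factory=set)
    parent: "Policy | None" = None

    def resolve(self) -> tuple[set[str], set[str]]:
        """Union this level's rules with every level above it."""
        data, uses = set(self.blocked_data), set(self.blocked_use_cases)
        if self.parent is not None:
            parent_data, parent_uses = self.parent.resolve()
            data |= parent_data
            uses |= parent_uses
        return data, uses

# Illustrative detectors for a few data categories.
DETECTORS = {
    "credential": re.compile(r"\b(?:api[_-]?key|password)\s*[:=]\s*\S+", re.I),
    "email": re.compile(r"\b[\w.+-]+@[\w-]+\.[\w.]+\b"),
    "project_codename": re.compile(r"\bProject\s+Falcon\b"),
}

def scan(policy: Policy, prompt: str, use_case: str) -> list[str]:
    """Return violations; an empty list means the prompt may reach the model."""
    blocked_data, blocked_uses = policy.resolve()
    violations = [f"use case '{use_case}' is blocked"] if use_case in blocked_uses else []
    for category in sorted(blocked_data):
        detector = DETECTORS.get(category)
        if detector and detector.search(prompt):
            violations.append(f"prompt contains {category}")
    return violations

org = Policy("org", blocked_data={"credential"}, blocked_use_cases={"hiring_decisions"})
team = Policy("research", blocked_data={"project_codename"}, parent=org)
deployment = Policy("research-chat", blocked_data={"email"}, parent=team)

print(scan(deployment, "Summarize Project Falcon; password: hunter2", "summarization"))
```

Resolving rules as a union means a deployment owner cannot accidentally (or deliberately) switch off an organization-wide restriction, which is what lets the written policy behave as a control.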

There are currently over 1,600 AI-related legislative actions tracked globally, according to SecurePrivacy’s 2026 analysis. That number is growing. No compliance team can manually monitor regulatory developments across every jurisdiction they operate in.

Agent-based regulatory monitoring scans legislative databases, regulatory agency publications, and legal analysis sources continuously. When a new regulation is proposed, amended, or enacted, the agent evaluates it against your current compliance posture: does this affect us? Which systems are in scope? What’s the timeline? What do we need to change?

The output is a structured impact assessment, not a news alert. The difference matters. A news alert tells you something happened. An impact assessment tells you what it means for your specific organization and what you need to do about it.
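One way to structure that impact assessment, sketched under heavy assumptions: the agent has already extracted the new rule into a `Regulation` record, and each entry in the system inventory lists where it is deployed, what it is used for, and which controls it already has. All names, jurisdictions, and controls here are invented for illustration. The `assess` function answers the four questions (does this affect us, which systems, what timeline, what to change) in one structured result.

```python
from dataclasses import dataclass
from datetime import date

@dataclass
class Regulation:
    """Hypothetical structured record an agent extracts from a new legal text."""
    name: str
    jurisdictions: set[str]
    covered_use_cases: set[str]
    effective: date
    required_controls: set[str]

@dataclass
class System:
    """One entry in the organization's AI system inventory (illustrative fields)."""
    name: str
    deployed_in: set[str]
    use_cases: set[str]
    controls: set[str]

def assess(reg: Regulation, systems: list[System], today: date) -> dict:
    """Does this affect us, which systems, what timeline, what must change."""
    in_scope = [
        s for s in systems
        # In scope only if deployed in a covered jurisdiction AND used for a covered purpose.
        if s.deployed_in & reg.jurisdictions and s.use_cases & reg.covered_use_cases
    ]
    return {
        "regulation": reg.name,
        "affects_us": bool(in_scope),
        "systems_in_scope": [s.name for s in in_scope],
        "days_until_effective": (reg.effective - today).days,
        # Required controls that each in-scope system does not yet have.
        "gaps": {s.name: sorted(reg.required_controls - s.controls) for s in in_scope},
    }

rule = Regulation("Example AI Disclosure Rule", {"XY"}, {"credit_scoring"},
                  date(2027, 1, 1), {"impact_assessment", "consumer_notice"})
inventory = [
    System("loan-scorer", {"XY", "ZZ"}, {"credit_scoring"}, {"impact_assessment"}),
    System("faq-bot", {"XY"}, {"chatbot"}, set()),
    System("zz-loan-scorer", {"ZZ"}, {"credit_scoring"}, set()),
]
report = assess(rule, inventory, today=date(2026, 1, 1))
print(report)
```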

When an auditor asks for evidence that your AI systems comply with a specific regulation, the typical response is weeks of scrambling — pulling logs from different systems, cross-referencing access records, assembling documentation from scattered sources.

Agents can maintain audit-ready evidence packages continuously. Every model interaction logged. Every policy evaluation recorded. Every compliance check timestamped and stored. When the audit request comes in, the agent assembles the evidence package against the specific regulatory requirements being audited.

This turns audit preparation from a fire drill into a query.
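As a sketch of audit preparation as a query, the snippet below stores each compliance check as a timestamped row tagged with the requirement it supports, using Python’s built-in `sqlite3` module and an in-memory database. The schema, the `record`/`evidence_package` helpers, and the requirement tags (`HIPAA-audit-controls`, `SOC2-change-mgmt`) are hypothetical; a production system would use durable, access-controlled storage.

```python
import sqlite3
from datetime import datetime, timezone

# Hypothetical evidence schema: one row per compliance check.
conn = sqlite3.connect(":memory:")
conn.execute("""
    CREATE TABLE evidence (
        recorded_at TEXT NOT NULL,    -- ISO 8601 UTC timestamp
        requirement TEXT NOT NULL,    -- illustrative requirement tag
        system      TEXT NOT NULL,
        check_name  TEXT NOT NULL,
        passed      INTEGER NOT NULL,
        detail      TEXT
    )
""")

def utc(*parts):
    return datetime(*parts, tzinfo=timezone.utc)

def record(requirement, system, check_name, passed, detail="", at=None):
    """Append one timestamped piece of evidence (UTC, so ISO strings sort correctly)."""
    at = at or datetime.now(timezone.utc)
    conn.execute("INSERT INTO evidence VALUES (?, ?, ?, ?, ?, ?)",
                 (at.isoformat(), requirement, system, check_name, int(passed), detail))

def evidence_package(requirement, start, end):
    """Assemble all evidence for one requirement within [start, end)."""
    rows = conn.execute(
        "SELECT recorded_at, system, check_name, passed, detail FROM evidence "
        "WHERE requirement = ? AND recorded_at >= ? AND recorded_at < ? "
        "ORDER BY recorded_at",
        (requirement, start.isoformat(), end.isoformat()),
    ).fetchall()
    return {
        "requirement": requirement,
        "period": (start.isoformat(), end.isoformat()),
        "checks": len(rows),
        "failures": [r for r in rows if not r[3]],
        "rows": rows,
    }

record("HIPAA-audit-controls", "triage-model", "audit_logging_enabled", True, at=utc(2026, 3, 1))
record("HIPAA-audit-controls", "triage-model", "audit_logging_enabled", False,
       "log shipper down", at=utc(2026, 3, 2))
record("SOC2-change-mgmt", "triage-model", "change_ticket_linked", True, at=utc(2026, 3, 2))
record("HIPAA-audit-controls", "triage-model", "audit_logging_enabled", True, at=utc(2026, 4, 1))

pkg = evidence_package("HIPAA-audit-controls", utc(2026, 3, 1), utc(2026, 4, 1))
print(pkg["checks"], pkg["failures"])
```

Failures stay in the package rather than being filtered out: an auditor usually wants to see that a lapse was detected and when, not a record with the gaps removed.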

Here’s where most organizations get it wrong: they deploy compliance agents without governing the compliance agents themselves.

If your AI-powered audit review system isn’t itself auditable, you have a problem. If your automated risk classifier makes a wrong call and there’s no record of how it reached that conclusion, you’ve created a new risk while trying to mitigate an old one.

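Agent-driven compliance systems need the same controls as any other AI system: audit trails for every action, explainable decisions, access restrictions, policy enforcement, and human sign-off on sensitive operations.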

This is the part that separates a functioning compliance automation system from an expensive liability. The governance layer isn’t optional. It’s the whole point.
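One concrete way to make a compliance agent’s own decisions auditable is an append-only decision log in which each entry records the inputs, the conclusion, and the rationale, plus a SHA-256 hash of the previous entry. This hash chain is a common tamper-evidence technique, not a feature the article attributes to any particular product; `DecisionLog` and its fields are hypothetical.

```python
import hashlib
import json
from datetime import datetime, timezone

class DecisionLog:
    """Append-only log of agent decisions. Each entry hashes the previous one,
    so editing or deleting an earlier entry breaks verification."""

    GENESIS = "0" * 64

    def __init__(self):
        self.entries = []

    def append(self, agent, action, inputs, conclusion, rationale):
        prev_hash = self.entries[-1]["hash"] if self.entries else self.GENESIS
        body = {
            "at": datetime.now(timezone.utc).isoformat(),
            "agent": agent,
            "action": action,
            "inputs": inputs,
            "conclusion": conclusion,
            "rationale": rationale,      # how the agent reached its conclusion
            "prev_hash": prev_hash,
        }
        body["hash"] = self._digest(body)
        self.entries.append(body)
        return body

    @staticmethod
    def _digest(body):
        # Hash every field except the stored hash, with stable key order.
        payload = {k: v for k, v in body.items() if k != "hash"}
        return hashlib.sha256(json.dumps(payload, sort_keys=True).encode()).hexdigest()

    def verify(self):
        """Recompute each hash and check each link to the previous entry."""
        prev = self.GENESIS
        for entry in self.entries:
            if entry["prev_hash"] != prev or entry["hash"] != self._digest(entry):
                return False
            prev = entry["hash"]
        return True

log = DecisionLog()
log.append("risk-classifier", "classify", {"system": "resume-screener"},
           "high", "use case 'employment' matched a high-risk area rule")
log.append("audit-reviewer", "flag", {"event_id": "e2"},
           "violation", "patient_record accessed in analytics context")
print(log.verify())                       # True
log.entries[0]["conclusion"] = "minimal"  # simulate after-the-fact tampering
print(log.verify())                       # False
```

Hash chaining detects edits to earlier entries, but anyone with write access could rebuild the whole chain, so in practice the latest hash would also be anchored somewhere the agent itself cannot modify.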

The architecture that works has three layers:

Layer 1: Execution. Agents doing the work — scanning logs, classifying systems, generating documentation, monitoring regulatory changes. This is where the speed and scale come from.

Layer 2: Governance. Controls on the agents — audit trails, policy enforcement, access restrictions, approval workflows. Every action the agent takes is logged, every decision is explainable, every sensitive operation requires human sign-off.

Layer 3: Oversight. Human review of agent outputs, exception handling, judgment calls on edge cases, and periodic validation that the agents are performing correctly. This is where compliance expertise lives.
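The three layers can be sketched as a small routing component. In this hypothetical setup, execution-layer agents only propose actions; the governance layer logs every proposal, denies anything outside the agent’s permissions, and parks sensitive actions in a queue that a named human must approve. `SENSITIVE_KINDS`, `AGENT_PERMISSIONS`, and the status strings are assumptions, and the component records decisions rather than carrying the actions out.

```python
from dataclasses import dataclass, field

@dataclass
class ProposedAction:
    """Layer 1 output: what an execution agent wants to do, and why."""
    agent: str
    kind: str          # e.g. "draft_report", "quarantine_model"
    target: str
    rationale: str

# Hypothetical governance configuration.
SENSITIVE_KINDS = {"quarantine_model", "revoke_access", "file_regulatory_notice"}
AGENT_PERMISSIONS = {
    "log-reviewer": {"draft_report", "quarantine_model"},
    "reg-monitor": {"draft_report"},
}

@dataclass
class GovernanceLayer:
    audit_trail: list = field(default_factory=list)
    review_queue: list = field(default_factory=list)   # Layer 3: humans work this queue

    def submit(self, action: ProposedAction) -> str:
        """Layer 2: log every proposal, enforce permissions, route sensitive ones to humans."""
        if action.kind not in AGENT_PERMISSIONS.get(action.agent, set()):
            status = "denied"
        elif action.kind in SENSITIVE_KINDS:
            status = "pending_human_approval"
            self.review_queue.append(action)
        else:
            status = "allowed"
        self.audit_trail.append((action, status))
        return status

    def approve(self, action: ProposedAction, reviewer: str) -> str:
        """Layer 3: a named human signs off before a sensitive action is allowed."""
        self.review_queue.remove(action)
        self.audit_trail.append((action, f"approved_by:{reviewer}"))
        return "allowed"

gov = GovernanceLayer()
a1 = ProposedAction("reg-monitor", "draft_report", "impact-assessment-42", "new rule in scope")
a2 = ProposedAction("log-reviewer", "quarantine_model", "triage-model", "unredacted PHI in outputs")
a3 = ProposedAction("reg-monitor", "revoke_access", "analyst_kim", "out-of-scope access")
print([gov.submit(a) for a in (a1, a2, a3)])  # ['allowed', 'pending_human_approval', 'denied']
print(gov.approve(a2, reviewer="compliance-lead"))
```

Keeping the approval step on the same `audit_trail` as the agent’s proposals means the oversight layer is governed by the same record as the execution layer, rather than living in a separate email thread.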

Calliope builds this stack natively. Audit logging captures every AI interaction across the platform. Policy inheritance propagates governance rules from the organization level down to individual agent deployments. Content scanning catches sensitive data before it moves through the system. The compliance agents and the systems they monitor operate under the same governance framework — which is the only architecture that makes sense when AI is both the subject and the tool of compliance.

The compliance burden is going to keep growing. More jurisdictions, more regulations, more audit requirements, more documentation obligations. The number of AI systems in your organization is also going to keep growing. More models, more use cases, more data flows, more risk surface.

These two curves are diverging. Manual compliance processes can’t close the gap. The organizations that figure out agent-driven compliance — with proper governance over the agents themselves — will operate in the regulatory environment that’s coming. The ones that don’t will spend an increasing share of their resources on compliance work that doesn’t scale, and they’ll still miss things.

The tools exist. The patterns are proven. The question is whether your compliance stack is ready for a world where the volume of regulatory obligations grows faster than your headcount ever will.

Sources: Gartner, “Global AI Regulations Fuel Billion-Dollar Market for AI Governance Platforms,” February 17, 2026. SecurePrivacy, “AI Risk Compliance 2026.”

From Prototype to Production: What It Actually Takes Most RAG tutorials stop at “put documents in a vector …

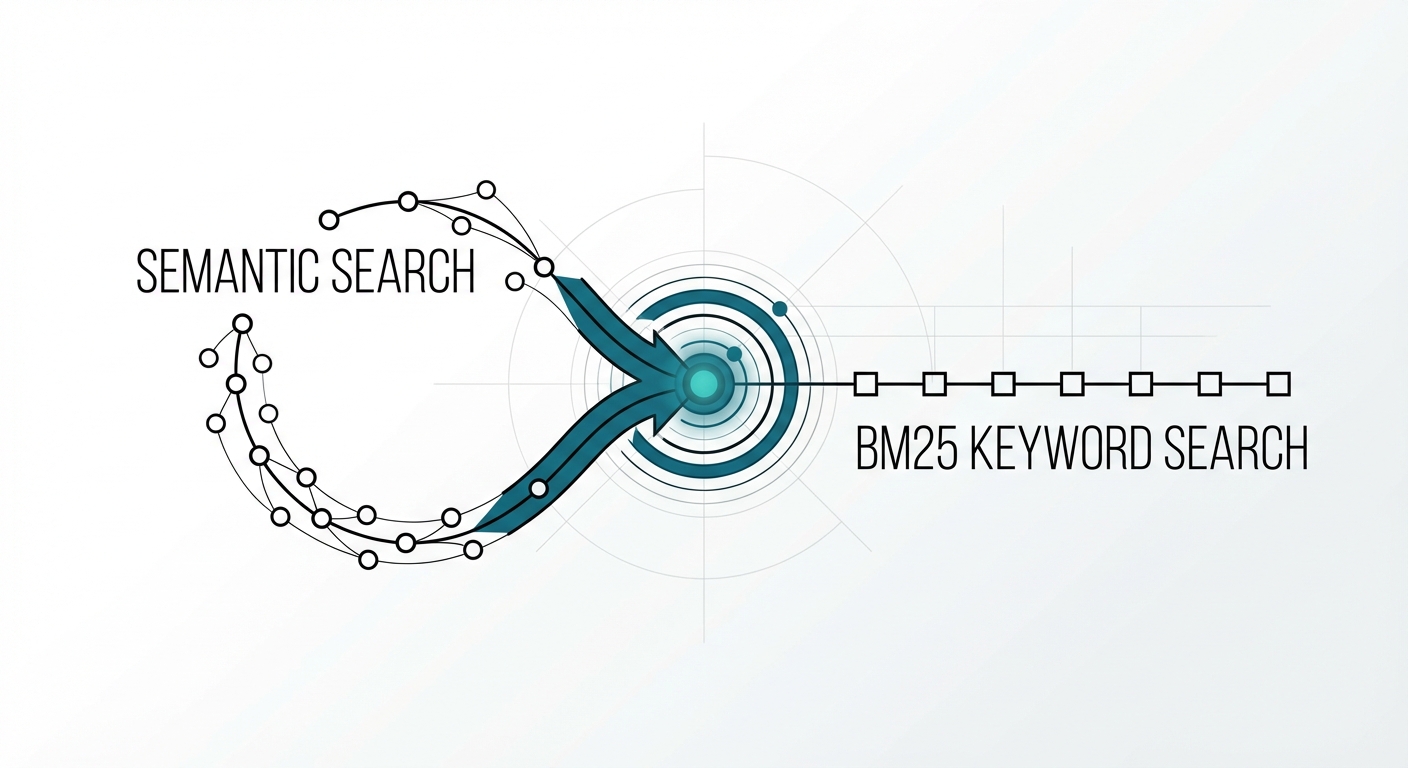

The Retrieval Problem No One Talks About You built a RAG system with a state-of-the-art embedding model. Semantic search …